Dear AEA365 readers,

I’m Sara Vaca, independent consultant and frequent Saturday contributor. I’m thrilled because one of my current commitments is working with UNFPA again, this time with the Eastern Europe and Central Asia Regional Office (a fascinating region), facilitating their first Developmental Evaluation (and mine!). I am fascinated by how different this approach, which I’ve heard and read so much about, is from what I’ve done in the past.

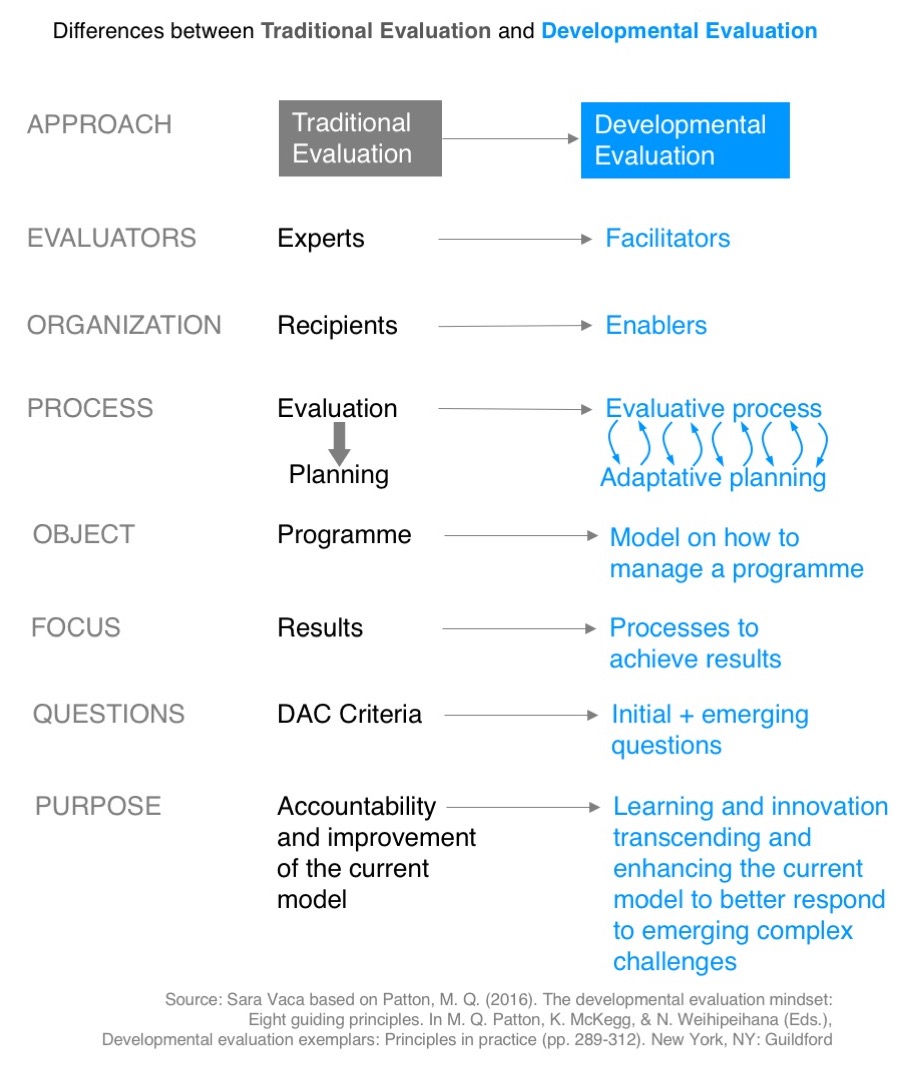

Lesson Learned 1: I’m used to doing traditional evaluations, and this developmental approach brings many shifts:

- Management needs to be committed to innovation.

- The evaluators will act as facilitators of the process.

- The expected result is the co-creation of a social innovation rather than a set of recommendations.

- It is focused on learning.

- It involves moving from individual to collective sense-making (shout-out to the EvalCafe podcast).

In fact, rather than an evaluation, I would call it a collaboration with evaluative lenses!

Lesson Learned 2: Developmental Evaluation is really taking me out of my comfort zone. I realize that I miss some of the pillars that used to support my work:

- Not having evaluation questions, which act as the “north” on an evaluation’s compass, is a game-changer and a big shift in how you internally check whether what you are doing is the right thing. I am fine without them; I just miss the way they guide methodological and technical choices in advance.

- I also miss the clear timeline, where you know when and where (well, these days it is always from home) each phase will take place. We still have some milestones defined down the line, but the road as a whole is less clear.

- Finally, while everybody knows what an evaluation is, it is not so easy to explain to staff and stakeholders what a developmental evaluation is.

Lesson Learned 3: However, beyond the uncertainty, I’m truly enjoying the experience:

- Big change: it is a relief that evaluative judgements about performance are not the main focus of the process, which takes the pressure off the organization’s staff.

- The approach is promising and generates enthusiasm in the organization (even if that hope now turns into pressure on the evaluation team to help come up with something new, and good).

- Also, since the evaluation’s deliverables shift (for example, from inception and final reports to real-time working notes), we have more flexibility with these products, as evaluation guidelines do not specify what they should include or look like.

We have just started the inception phase, so there is still a lot to experiment with and learn on this journey, but I am very grateful to be having this next-level experience in my evaluation practice.

Final Note: This is my last Saturday post for a while (I’m starting new personal projects that will keep me busy), but I want to thank AEA365 for the platform and the good discussions, and I will be back during individual contributors’ weeks at some point! Take care 🙂

Do you have questions, concerns, kudos, or content to extend this aea365 contribution? Please add them in the comments section for this post on the aea365 webpage so that we may enrich our community of practice. Would you like to submit an aea365 Tip? Please send a note of interest to aea365@eval.org. aea365 is sponsored by the American Evaluation Association and provides a Tip-a-Day by and for evaluators.

Hi Sara,

I am new to the field of program evaluation, but have been thoroughly enjoying learning about this dynamic profession through the course PME 802 – Program Inquiry and Evaluation as part of Queen’s University’s Professional Master of Education program.

I found your summary of “Developmental Evaluation” to be very engaging, relevant and helpful. I especially connected with your point that “the expected result is the co-creation of a social innovation rather than a set of recommendations”. I work as a teacher in the public school system in British Columbia. I strongly agree that the most meaningful and useful work comes when ideas, thoughts, connections and questions are shared in a supportive and collaborative environment. One of the main themes I have found throughout my learning about program evaluation is the need for an evaluation to be useful for the primary intended user(s). I think you have shown how the “Developmental Evaluation” approach offers a greater chance of this happening than traditional approaches do.

I really appreciate your honesty about your feelings of uncertainty regarding this new approach. I think we can all agree that pushing ourselves out of our comfort zones is uncomfortable, yet necessary for real growth to occur. I am honoured to live on the traditional territory of the Lil’wat Nation. Dr. Lorna Williams (Lil’wat) has published six Lil’wat Principles of Learning. I believe that the principle of Cwelelep applies to what any evaluator transitioning from traditional evaluation methods to new approaches would experience. Cwelelep is the ability to recognize the need to sometimes be in a place of dissonance and uncertainty, so as to be open to new learning (Sanford, Williams, Hopper & McGregor, 2012). I find it reassuring to remind ourselves that these feelings of uncertainty are natural and necessary for new learning and innovation to occur.

Thanks again for an excellent blog post!

Cheers,

Tricia Mitchell

Reference:

Sanford, K., Williams, L., Hopper, T., & McGregor, C. (2012). Indigenous Principles Decolonizing Teacher Education: What We Have Learned. Education 3-13, 18.

Hi Sara, thank you for this interesting post as always.

Regarding the three pillars you miss from the traditional evaluation approach, I don’t think there is any need to leave that “comfort zone”, as you call it. On the contrary, I would turn each one into a challenge, for example:

1) Keep the evaluation questions: build your own questions to work on as a facilitator, so that you come up with the innovation product together with the team.

2) A clear timeline is always possible.

3) Starting the process by collectively building your own definition of developmental evaluation can also be an interesting challenge!

I’m going to miss you a lot around here, I really enjoy your “out of the box” thoughts!

Good luck with your new assignment, keep us posted on the results.