Hello from Andres Lazaro Lopez and Mari Kemis from the Research Institute for Studies in Education at Iowa State University. As STEM education becomes more of a national priority, state governments and education professionals are increasingly collaborating with nonprofits and businesses to implement statewide STEM initiatives. Supported by National Science Foundation funding, we have been tasked with conducting a process evaluation of the Iowa statewide STEM initiative, both to assess Iowa’s initiative and to create a logic model that will help inform other states on model STEM evaluation.

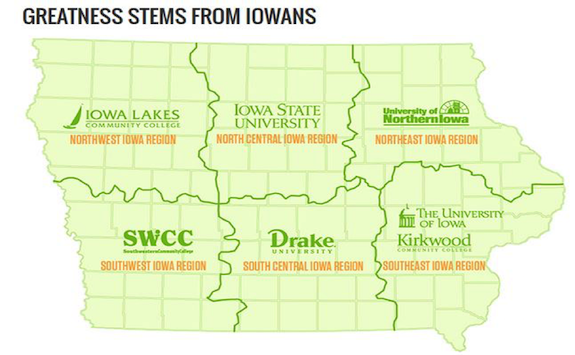

While social network analysis (SNA) has become commonly used to examine STEM challenges and strategies for advancement (particularly for women faculty, racial minorities, young girls, and STEM teacher turnover), to our knowledge we are the first to use SNA specifically to understand a statewide STEM initiative’s collaboration, growth, potential, and bias. Our evaluation focuses specifically on the state’s six regional STEM networks, their growth and density over the initiative’s years (’07-’15), and the professional affiliations of their collaborators. How we translated that into actionable decision points for key stakeholders is the focus of this post.

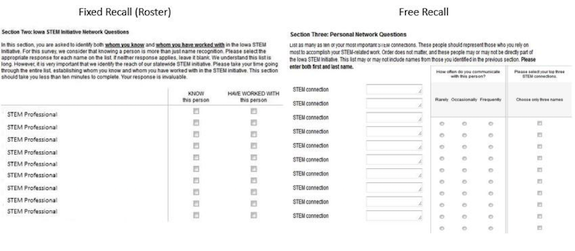

Lessons Learned: Because we were interested in both the boundaries of the statewide network and the ego networks of key STEM players, we decided to use both free and fixed recall approaches. Using data from an extensive document analysis, we identified 391 STEM professionals for our roster (fixed recall) approach. We asked respondents to categorize the people on this list by whether they knew them and whether they worked with them. Next, the free recall section allowed respondents to list the professionals they rely on most to accomplish their STEM work, along with their level of weekly communication, generating 483 additional names not identified with the roster approach. Together, the two strategies allowed us to measure potential and actual collaboration both within the well-known network of STEM professionals (roster) and within individuals’ local networks (free recall).

Lessons Learned: The data offered compelling information for both regional and statewide use. Centrality measurements helped identify regional players that had important network positions but were underutilized. Network diameter and clique score measurements informed the executive council of overall network health and specific areas that require initiative resources.
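For readers new to these measures, here is a minimal sketch of how density, degree centrality, and diameter can be computed from a list of collaboration ties. It uses only the Python standard library; the five-person network and the function names are hypothetical illustrations, not our survey data or the tooling we used, and degree centrality is only one of several centrality measures.

```python
from collections import deque

# Hypothetical collaboration ties (illustrative only, not data from the evaluation).
ties = [("A", "B"), ("A", "C"), ("B", "C"), ("C", "D"), ("D", "E")]

def build_graph(edges):
    """Build an undirected adjacency map from (person, person) ties."""
    graph = {}
    for u, v in edges:
        graph.setdefault(u, set()).add(v)
        graph.setdefault(v, set()).add(u)
    return graph

def density(graph):
    """Share of all possible ties that are actually present."""
    n = len(graph)
    m = sum(len(nbrs) for nbrs in graph.values()) // 2
    return 2 * m / (n * (n - 1)) if n > 1 else 0.0

def degree_centrality(graph):
    """Each person's number of ties, scaled by the maximum possible (n - 1)."""
    n = len(graph)
    if n < 2:
        return {node: 0.0 for node in graph}
    return {node: len(nbrs) / (n - 1) for node, nbrs in graph.items()}

def shortest_path_lengths(graph, source):
    """Breadth-first search distances from one person to everyone reachable."""
    dist = {source: 0}
    queue = deque([source])
    while queue:
        node = queue.popleft()
        for nbr in graph[node]:
            if nbr not in dist:
                dist[nbr] = dist[node] + 1
                queue.append(nbr)
    return dist

def diameter(graph):
    """Longest shortest path between any two people (assumes a connected network)."""
    return max(max(shortest_path_lengths(graph, s).values()) for s in graph)

graph = build_graph(ties)
print(density(graph))
print(degree_centrality(graph))
print(diameter(graph))
```

In this toy network, person C has the highest degree centrality, which is the kind of signal that can flag a well-positioned regional player.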

Lessons Learned: Most importantly, the SNA data allowed the initiative to see beyond the usual go-to stakeholders. With a variety of SNA measurements and our three variables, we have been successful in identifying a diverse list of stakeholders while offering suggestions for how to trim down the networks’ size without creating single points of fracture. SNA has been an invaluable tool for formally classifying and evaluating the logistics of key STEM players. We recommend that other STEM initiatives interested in using SNA begin identifying a roster of collaborators early in the development of their initiative.
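One way to think about a "single point of fracture" is as a person whose removal would split the network into disconnected pieces (a cut vertex, in graph terms). The sketch below checks this by brute force on a small hypothetical network. It is an illustration under that assumption, not a description of the procedure we followed, and the names `is_connected` and `fracture_points` are made up for this example.

```python
# Hypothetical undirected network (illustrative only, not data from the evaluation).
graph = {
    "A": {"B", "C"},
    "B": {"A", "C"},
    "C": {"A", "B", "D"},
    "D": {"C", "E"},
    "E": {"D"},
}

def is_connected(graph, skip=None):
    """Return True if everyone except `skip` can still reach everyone else."""
    nodes = [n for n in graph if n != skip]
    if not nodes:
        return True
    seen = {nodes[0]}
    stack = [nodes[0]]
    while stack:
        node = stack.pop()
        for nbr in graph[node]:
            if nbr != skip and nbr not in seen:
                seen.add(nbr)
                stack.append(nbr)
    return len(seen) == len(nodes)

def fracture_points(graph):
    """People whose removal disconnects an otherwise connected network."""
    return sorted(n for n in graph if not is_connected(graph, skip=n))

print(fracture_points(graph))
```

Here removing C or D would cut others off from the rest of the network, so a trimming plan would want to keep them or add ties around them first.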

The American Evaluation Association is celebrating Social Network Analysis Week with our colleagues in the Social Network Analysis Topical Interest Group. The contributions all this week to aea365 come from our SNA TIG members. aea365 is sponsored by the American Evaluation Association and provides a Tip-a-Day by and for evaluators.

This looks like a great study. Can you share your survey questions with us? The graphic is too small to read the actual language. Thanks!