Hi! We are Shelly Engelman, Kristin Patterson, Brandon Campitelli, and Keely Finkelstein of the Texas Institute for Discovery Education in Science (TIDES) at the University of Texas, Austin. The mission of TIDES is to promote, support, and assess innovative, evidence-based undergraduate science education. A large part of our work involves partnering with STEM faculty to evaluate the efficacy and impact of education programs on students.

At the beginning of every project, our tendency as evaluators is to generate a logic model to visually represent how a program is intended to work and bring about change. Recently, however, faculty and staff’s reactions to logic models forced us to change directions and create a new, more useful and palatable format.

Here are a few quotes from faculty highlighting some of the impediments to using logic models with STEM faculty:

This [logic model] is really hard to digest and full of jargon. What’s the difference between an output and an outcome?

Wow…this is over my head. I’d like to see this summarized in a table format.

Cool…but, I don’t know how useful this will be. It would be helpful if I saw a timeline with clearly delineated roles and responsibilities.

How does this logic model relate to the evaluation plan? I’d like to see the logic model and evaluation plan on one page…in one figure.

Where are my program’s goals in this model? Can we emphasize them more?

Hot Tips:

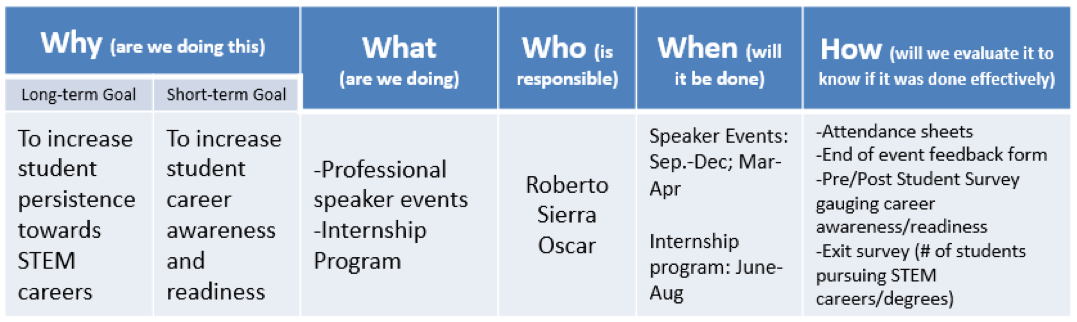

Using a ‘who, what, when, why, and how’ approach, we re-designed our logic model to use terms, concepts, and a format that is more familiar to STEM faculty and staff. This approach not only captures the program essentials, but also assigns roles and responsibilities to staff members, establishes a timeline of events, integrates the evaluation component, and emphasizes the program’s goals. Instead of nebulous concepts framing the logic model (e.g., outputs), our re-designed approach is organized by the following questions; note how they are aligned to components of a logic model/evaluation plan:

| Questions | Aligned to… |
| --- | --- |
| Why (are we doing this?) | Impact/Outcomes |
| What (are we doing?) | Activities |
| Who (is responsible?) | Inputs |
| When (will it be done?) | Timeline |
| How (will we evaluate it to know if it was done effectively?) | Evaluation methodology |

Cool Tricks: Use a table format to rearticulate a classic logic model

Guided by these questions, it is fairly straightforward to rearticulate a logic model into a table format. We found that non-evaluators gravitate to a table because they can clearly see the alignment between their program’s goals, activities, timeline, and the evaluation methodology. Here is an example below:

Contribute Your Own Best Practices

We appreciate that the evaluation community has more to learn about effectively communicating logic models to non-evaluators. Previous AEA 365 blog posts by Corey Smith and Matt Keene suggest that there is a need to explore alternative approaches to logic models. We invite you to share your best practices. For those interested, we could put together a panel presentation at a future AEA conference!

Do you have questions, concerns, kudos, or content to extend this aea365 contribution? Please add them in the comments section for this post on the aea365 webpage so that we may enrich our community of practice. Would you like to submit an aea365 Tip? Please send a note of interest to aea365@eval.org. aea365 is sponsored by the American Evaluation Association and provides a Tip-a-Day by and for evaluators.

Thank you for the interesting post! I am a master’s student currently taking a Program Inquiry and Evaluation course. I just completed a logic model template for an assignment and found this post quite relevant and interesting. As a student, all of the exercises are theoretical, and sometimes the real-life application can be difficult to appreciate. I believe this is an excellent example of adjusting the material for the appropriate audience. As the staff and faculty are the ones implementing the program, it is essential that they understand the logic model.

The comment that you received about jargon really made me think about the practicality of sharing these logic models and program plans with stakeholders who aren’t familiar with the terminology. Should evaluators be considering models similar to your example, with simplified layman’s terms, to share with stakeholders? Or perhaps the simplified version should be made for the implementers of the program? I really liked the example that you provided, but I would be interested in seeing the original “hard to understand” version for comparison. Was the original that complicated? As I am coming to learn, evaluators have a challenging, dynamic role that requires adapting to each program and each stakeholder or implementing group.

Thanks for the interesting post,

Crystal

Hi Shelly, Kristin, Brandon and Keely,

I am a graduate student in the Professional Master of Education program through Queen’s University. Our assignment has led us to find an article on AEA365 and respond to the author(s). I came upon your article as my current interest involves STEM activities on Fridays with my grade 6 students. I am even more excited to say that the logic model you have used for STEM applies directly to how I can evaluate my students in this subject area. I appreciate how you have all aligned the “who, what, where, when, how” approach with the logic model/evaluation plan.

As an extension of the idea you have all presented, I can see myself using this format for school goal setting with staff. I am also an elementary vice-principal, and I see this format as an excellent opportunity to scaffold goal setting, project-based learning, or meeting curricular and core competency learning objectives in the classroom with students.

Thanks,

Ashley Pagan

thanks – very handy!

This is great! I’m keeping this resource handy for helping people understand logic models. Any tips for the outputs versus outcomes comment? I feel like that’s one that comes up a lot and I haven’t figured out the secret to explaining it.

Hi Kendra,

Thank you for your feedback! When explaining the difference between outputs and outcomes, I typically ask clients to think of outputs as the ‘quality and quantity’ of the activities. (Were activities provided? How often/frequently were they provided? Were participants satisfied with the activities? Did they meet their needs?) In terms of outcomes, I usually tell clients to think of the changes in knowledge, skills, or attitudes that they hope to see in participants. I hope this helps!

This is excellent. I often begin with the “end in mind” with my clients and work from “right to left”. But the way you have “flipped” the Logic Model to show the goals first (on the left side) totally makes sense and is much more “brain compatible” than working from right to left. I am going to adopt your model! Thank you for sharing!

Sondra