Hello, my name is Michel Laurendeau, and I am a consultant wishing to share over 40 years of experience in policy development, performance measurement and evaluation of public programs. This is the fifth of seven (7) consecutive AEA365 posts discussing a stepwise approach to integrating performance measurement and evaluation strategies in order to more effectively support results-based management (RBM). In all post discussions, ‘program’ is meant to comprise government policies as well as broader initiatives involving multiple organizations.

This post discusses how the relation of performance measurement to results-based management should be articulated and incorporated into logic models.

Step 5 – Including the Management Cycle

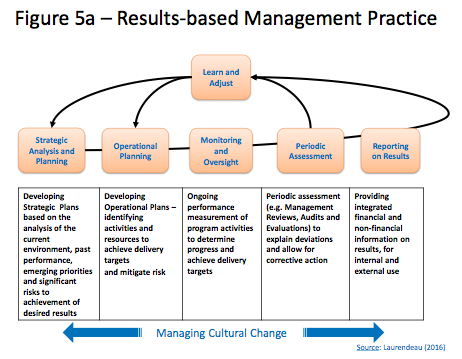

Some logic models try to include management as a program activity leading to corporate results (e.g., ‘financial/operational sustainability’ and ‘protection of organization’) that are presented as program outcomes. Indeed, good management can help improve program delivery and thus contribute to program performance. However, that contribution is indirect. It is normally achieved through the ongoing oversight and control of program delivery (and the occasional revision of program design), with the requisite adjustments to operational or strategic plans informed by ongoing measurement (or monitoring) and periodic assessments of program performance (see Figure 5a).

Results-based management (RBM) therefore depends on the identification of relevant indicators and the availability of valid and reliable data to correctly inform players/stakeholders and adequately support management reporting and decision-making processes. The quality and use of performance measurement systems for governance is in fact one of many elements of Management Accountability Frameworks (MAF) in the Canadian Federal Government, with other elements covering expectations regarding stewardship, policy and program development, risk management, citizen-focused service, accountability and people management. However, MAFs are developed and assessed through a process that is entirely separate from the one used for Performance Measurement Frameworks (PMF), which are based on delivery process models and/or logic models.

Indeed, the management cycle is relatively independent from actual program operations, with management standing in a relation of authority above program staff to provide oversight and control at each step of the delivery process (see Figure 4b in yesterday’s AEA365 post).

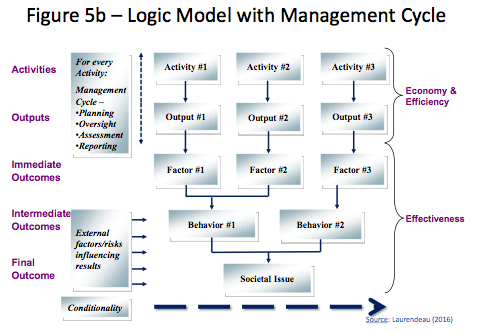

Trying to build the management cycle as a chain of results (or as a part of it) in a logic model is therefore inappropriate, as it creates unnecessary confusion between management and program performance issues. Presenting the results of good management as program outcomes also blurs the distinction between efficiency (i.e., the internal capacity to deliver) and effectiveness (i.e., program impacts on target populations). Figure 5b shows how to properly situate the management cycle in the logic model itself, essentially as an authoritative or facilitative process without direct causal links to specific program results.

This does not mean that management issues should be excluded from PMFs. Relevant indicators of management performance should also be selected for monitoring purposes whenever management itself identifies them as internal factors or risks that do (or may) influence program delivery.

The next AEA365 post will discuss ways of addressing indicators and actual measures of performance.

Do you have questions, concerns, kudos, or content to extend this aea365 contribution? Please add them in the comments section for this post on the aea365 webpage so that we may enrich our community of practice. Would you like to submit an aea365 Tip? Please send a note of interest to aea365@eval.org. aea365 is sponsored by the American Evaluation Association and provides a Tip-a-Day by and for evaluators.
