Hi! We are Nora Phelan and Lily Corrigan, researchers in the Evaluation & School Improvement Services division at Measurement Incorporated (MI). We partner with non-profit organizations, school districts, and government entities to help them evaluate programs and interventions, generally in educational settings. Today we are sharing some hot tips and lessons learned from our recent efforts to build evaluation capacity in a large school district using the principles of implementation science.

We were contracted to evaluate six school improvement initiatives operating across 38 low-performing schools. Funding for these programs was time-limited; they had all been operating for less than two years, and district administrators were eager for us to answer the ultimate evaluation question: What is the impact on students? We began by learning about each program, guided by the Appreciative Inquiry method. We found that all the programs were in the early stages of implementation and experiencing common growing pains, which included staff turnover, inconsistent data collection, lack of practical guidance from district leadership, and differing levels of support and adherence at each school.

When it came to our first round of evaluation reports, we knew that it was too early to look at outcomes for these interventions. We saw an opportunity to facilitate a conversation in the district about the importance of implementation fidelity and to encourage leaders to shift the way they approached their assessment of new interventions. We asked them to “zoom out” and look at the key components of program implementation before “zooming in” to examine the effect on students. If leaders zoomed in too quickly, a program could easily be labeled unsuccessful and dismissed when, in fact, it was never given the resources and opportunities to operate fully as intended.

Hot Tip

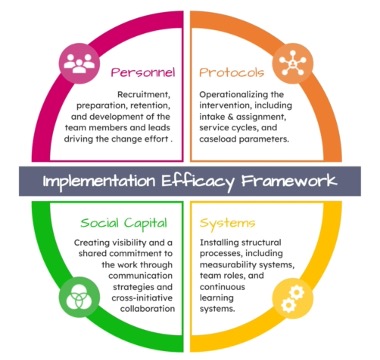

To encourage this shift, we identified four pillars based on the core capacities of implementation: Personnel, Protocols, Systems, and Social Capital. We structured each program's evaluation report around these four pillars and used color coding and consistent icons as visual cues. This helped district leaders become familiar with the pillars and let us show where the strengths and gaps lay in each program while highlighting themes across programs. Our reports were well received, and we have seen early indications that the district is acting on the information we presented to strengthen programs and establish continuous improvement cycles.

Lessons Learned

Relationships are key! We would not have been able to successfully communicate the importance of implementation without first establishing collaborative relationships with district and program leaders based on trust and shared goals. We met them where they were and demonstrated an appreciation for their need to learn about program effectiveness, before encouraging them to “zoom out” and focus their lens on capacity building, operations, and functionality. We had to build trust and credibility to open them to this perspective. Our approach as a genuine partner allowed district leaders to remain open and candid, even when receiving information that called for changes in the district.

Rad Resource

For more on Implementation Science, visit the National Implementation Research Network and the Active Implementation Hub.

The American Evaluation Association is hosting Organizational Learning and Evaluation Capacity Building (OL-ECB) Topical Interest Group Week. The contributions all this week to AEA365 come from our OL-ECB TIG members. AEA365 is sponsored by the American Evaluation Association and provides a Tip-a-Day by and for evaluators.