My name is Monica Hargraves and I work with Cooperative Extension associations across New York State as part of an evaluation capacity building effort in the Cornell Office for Research on Evaluation (CORE). My work with Extension is shaped, in part, by insights we gained through a Concept Mapping research project we did in late 2008. We wanted to explore, from practitioners’ perspectives, what factors contribute to supporting evaluation practice in an organization.

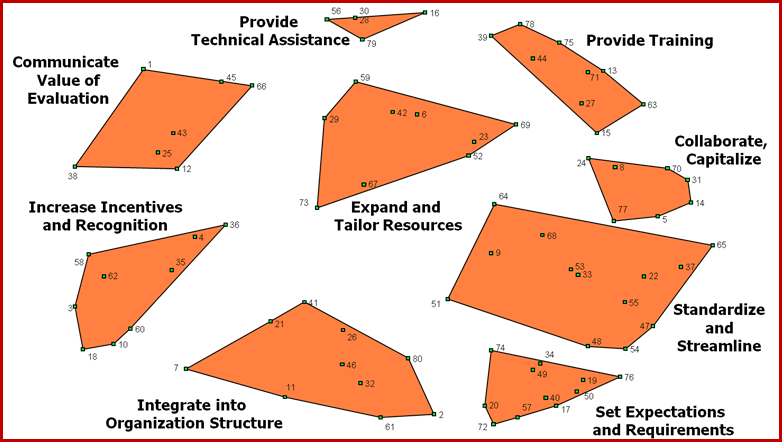

We used Concept Mapping software from Concept Systems, Inc. to gather ideas in response to this prompt: “One specific thing an Extension organization can do to support the practice of evaluation is …” Contributors included county-based educators and Executive Directors, as well as state-level Extension administrators and Cornell staff. The raw ideas were pared down to a working set of 80, and then participants sorted the ideas into clusters and rated them on two criteria: potential for making a difference, and relative difficulty.

The individual ideas become points on a “Cluster Map” that gives a visual representation of how participants conceptualized the patterns and themes in the ideas (see below). For information on the Concept Systems technology and the statistical techniques that underlie it, see www.conceptsystems.com. The ratings are useful for thinking strategically about what to give priority to when trying to improve and sustain evaluation practice in organizations.
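For readers curious about the mechanics, here is a minimal Python sketch of the two kinds of data described above: how often ideas were sorted into the same pile, and average ratings on the two criteria. It is not the Concept Systems software or its statistical method. The data, the 1-to-5 rating scale, and the function names (`co_occurrence`, `easy_wins`) are all hypothetical and chosen only for illustration.

```python
from itertools import combinations
from statistics import mean

# Hypothetical sort data: each participant groups idea IDs into piles.
sorts = [
    [{1, 2}, {3, 4}],
    [{1, 2, 3}, {4}],
    [{1}, {2, 3}, {4}],
]

# Hypothetical ratings on an assumed 1-5 scale, one value per participant.
ratings = {
    1: {"potential": [5, 4, 5], "difficulty": [2, 1, 2]},
    2: {"potential": [3, 3, 2], "difficulty": [4, 5, 4]},
    3: {"potential": [4, 5, 4], "difficulty": [4, 4, 5]},
    4: {"potential": [2, 3, 2], "difficulty": [1, 2, 1]},
}


def co_occurrence(sorts, idea_ids):
    """Count how many participants placed each pair of ideas in the same pile."""
    counts = {pair: 0 for pair in combinations(sorted(idea_ids), 2)}
    for piles in sorts:
        for pile in piles:
            for pair in combinations(sorted(pile), 2):
                counts[pair] += 1
    return counts


def easy_wins(ratings):
    """Return ideas rated above average on potential and below average on difficulty."""
    potential = {i: mean(r["potential"]) for i, r in ratings.items()}
    difficulty = {i: mean(r["difficulty"]) for i, r in ratings.items()}
    avg_potential = mean(potential.values())
    avg_difficulty = mean(difficulty.values())
    return sorted(
        i for i in ratings
        if potential[i] > avg_potential and difficulty[i] < avg_difficulty
    )


pair_counts = co_occurrence(sorts, ratings.keys())
priorities = easy_wins(ratings)
```

Pairs with high counts are ideas that participants tended to see as belonging together, which is the kind of pattern a cluster map summarizes visually. Comparing each idea with the overall averages is just one simple way to flag candidates that look high-impact and relatively easy; other cutoffs are equally defensible.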

Rad Resource: For more detail on the study, including a handout with the individual idea statements and their ratings on potential for making a difference, see http://core.human.cornell.edu/AEA_Conference.cfm#2008

Cluster Map of Ideas in Response to the Prompt: “One specific thing an Extension organization can do to support the practice of evaluation is …”

Lessons Learned:

- Technical assistance and training are not enough! The top-rated cluster in terms of potential for making a difference was “Communicate the Value of Evaluation.” The ideas there included educating organization leaders, staff, and volunteers on the importance of evaluation (not the how-to), using evaluation results well and demonstrating how they lead to better programming, having an evaluation champion in-house, and making evaluation results easy to understand and user-friendly.

- Communication is important. Communication should be used to motivate evaluation and build organizational commitment to it, and as a practical tool for sharing what works, fostering collaborations, and saving time.

- Leadership and structure matter. The second- and third-highest-rated clusters were “Set Expectations and Requirements” and “Integrate into Organization Structure.” Respondents wanted clarity and consistency, and wanted evaluation woven into a wide range of organization functions and practices.

This contribution is from the aea365 Daily Tips blog, by and for evaluators, from the American Evaluation Association. Please consider contributing – send a note of interest to aea365@eval.org.