Hi again! We are Aaron Gunning, Database Implementation Manager, and Laura Beals, Director of the Department of Evaluation and Learning, from Jewish Family and Children’s Services (JF&CS), a large multi-service nonprofit located near Boston, MA. In yesterday’s post, we introduced the idea of using electronic case records (ECRs) as an evaluation tool. The process of transitioning to ECRs has, however, sometimes been challenging for us and our staff, resulting in many lessons learned!

Lessons Learned:

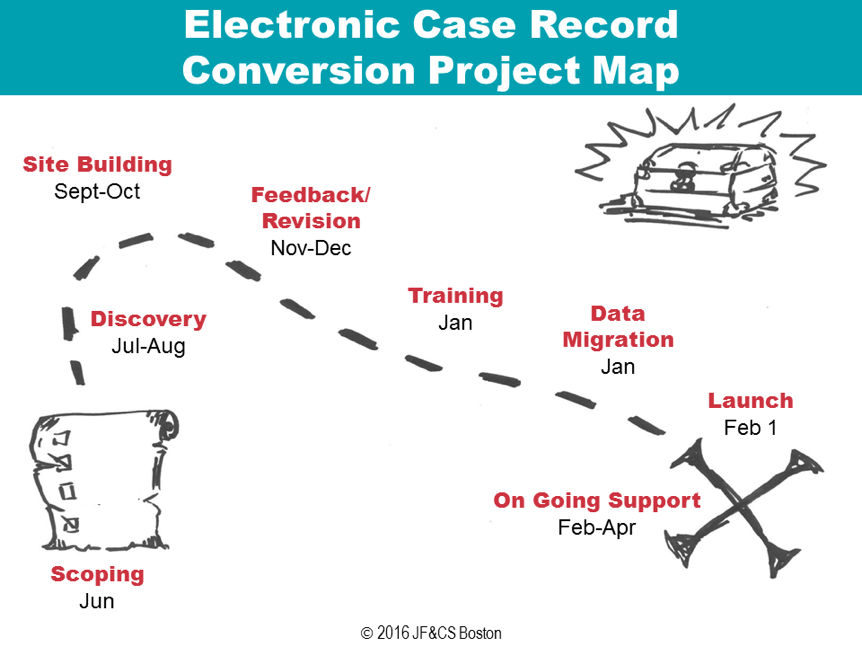

- Set expectations about the process so that everyone involved knows what to expect and when. Converting to an ECR can take many months, especially for complex programs starting from an entirely paper-based system. At the first kick-off meeting of the project, we outline the steps involved, expected timelines, and associated expectations.

- Use process mapping to understand program workflow. For each program that is migrating to ECRs, we create a process map so that we can ensure the database matches the workflow of the direct service staff. For these process maps, we use a “swim lanes” layout, with rows representing the people involved, systems used, data needed, and reporting needed at each step in the program. This layout ensures that we incorporate both the service delivery needs of staff and the reporting needs of the program. (We learned about process mapping for evaluation at Eval 2015 and again in 2016 from Kara Crohn and Matthew Galport from EMI.) Recently, we have used Lucidchart to generate the process map visuals, but they could also be created in PowerPoint, Word, or Excel.

- Create a “Pioneers” group to increase buy-in. To foster staff engagement in a recent conversion process, we recruited a group of “ECR Pioneers” who met bi-weekly to discuss questions related to the new ECR. This group helped to identify important functionalities, such as de-identifying printable client data to take into the field, and its members became enthusiastic champions of the new system.

- Schedule structured meetings for feedback throughout planning and implementation. We found that it often took several tries to build an ECR that fully met each program’s client data needs. As a result, we now hold structured meetings to solicit feedback from staff before we plan, between building and training, and one to two months after launching a new ECR.

- Build in sufficient time for training pre-launch and follow-up support post-launch. Many people learn best by doing, so to ensure that staff feel prepared, we provide extensive practice entering and retrieving fake client information before launching a new ECR. We also provide detailed training manuals that can be referenced later. After we launch, we embed an evaluation staff member in the program’s offices for up to a full month to support staff as they begin to use the new ECR with real clients.
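
For readers who would rather draft a process map as data before drawing it, the “swim lanes” layout from the process mapping lesson above could be sketched roughly as follows. This is only an illustration, not the tool we use: the `Step` class, the helper functions, and the example program steps are all hypothetical.

```python
from dataclasses import dataclass, field

# The four lanes named in the process mapping lesson; each step fills in every lane.
LANES = ("people", "systems", "data", "reporting")


@dataclass
class Step:
    """One step in a program's workflow (hypothetical structure)."""
    name: str
    people: list[str] = field(default_factory=list)
    systems: list[str] = field(default_factory=list)
    data: list[str] = field(default_factory=list)
    reporting: list[str] = field(default_factory=list)


def to_swim_lanes(steps):
    """Return {lane: [one cell per step]} so each lane reads left to right."""
    return {
        lane: [", ".join(getattr(step, lane)) or "-" for step in steps]
        for lane in LANES
    }


def missing_cells(steps):
    """List (step name, lane) pairs that are still empty."""
    return [
        (step.name, lane)
        for step in steps
        for lane in LANES
        if not getattr(step, lane)
    ]


# Made-up example steps, for illustration only.
steps = [
    Step(
        "Intake",
        people=["Intake coordinator"],
        systems=["Paper form"],
        data=["Client contact info"],
        reporting=["Referrals per month"],
    ),
    Step(
        "Assessment",
        people=["Case manager"],
        systems=["ECR"],
        data=["Needs assessment"],
    ),
]

lanes = to_swim_lanes(steps)
for lane in LANES:
    print(f"{lane:<10} | " + " | ".join(lanes[lane]))

# Empty cells make handy questions for the next staff feedback meeting.
print(missing_cells(steps))
```

Listing the empty cells is one possible way to spot where the reporting needs of a program have not yet been discussed with direct service staff.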

Do you have questions, concerns, kudos, or content to extend this aea365 contribution? Please add them in the comments section for this post on the aea365 webpage so that we may enrich our community of practice. Would you like to submit an aea365 Tip? Please send a note of interest to aea365@eval.org. aea365 is sponsored by the American Evaluation Association and provides a Tip-a-Day by and for evaluators.