My name is Awab and I work as a Monitoring & Evaluation Specialist for the Tertiary Education Support Project (TESP) at the Higher Education Commission (HEC), Islamabad, Pakistan.

In my experience, the most challenging task in any evaluation is to sell the findings and recommendations to the decision makers and make the evaluation usable. Many evaluations stay on the shelf and do not go beyond the covers of the report as their findings are not owned and used by the management and implementation team.

After conducting the Level-1 & 2 Evaluation (shared here earlier at https://goo.gl/gyit55), we recently conducted the Level-3 Evaluation of the TESP training programs (the full report is available at https://goo.gl/AELJtU). The overall purpose of the evaluation was to find out whether the learning from training had translated into improved performance in the workplace. We also wanted to document the lessons learnt from the training and use them to improve strategies for future training programs.

Cool Tricks:

To ensure that the findings and recommendations of the Level-3 Evaluation of the TESP training program would be used, we adopted the following strategies:

- Drafted the scope of work for the Level-3 Evaluation and shared it with the top management and the implementation team. As a result, they clearly understood the purpose and importance of the Level-3 Evaluation in measuring the effects of training on participants' performance.

- Engaged the implementation team in drafting and finalizing the survey questionnaire. As a result, they eagerly awaited the evaluation results so that they could learn how well their training program had improved participants' performance.

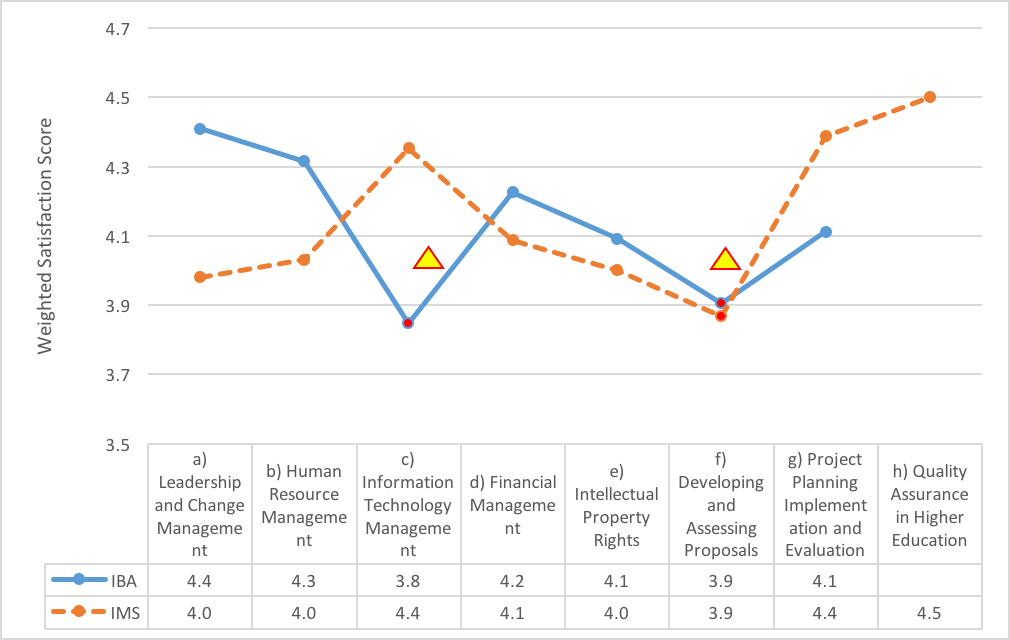

- Presented the overall results first to make them easy to understand. Then we disaggregated the information and explained the results ‘training theme-wise’ and ‘implementation partner (IP)-wise.’ As a result, the implementation team could see precisely where the problem areas were, and we avoided over-generalizations.

- Used data visualization techniques and presented the information as attractive graphs with appropriate highlights, as shown in the accompanying figure. This made the findings easy to understand.

- Adopted a sandwich approach in presenting the findings. We highlighted the achievements of the training program before pointing out the gaps, and closed the presentation with a note of appreciation for the implementation team. This helped the implementation team swallow the not-so-good feedback.

All of the above tricks helped the management acknowledge the findings of the evaluation and adopt its recommendations. Interestingly, at the end of our final presentation, the leader of the training implementation team was the one to lead the applause.

Do you have questions, concerns, kudos, or content to extend this aea365 contribution? Please add them in the comments section for this post on the aea365 webpage so that we may enrich our community of practice. Would you like to submit an aea365 Tip? Please send a note of interest to aea365@eval.org . aea365 is sponsored by the American Evaluation Association and provides a Tip-a-Day by and for evaluators.

Hello Awab,

I thoroughly enjoyed reading your article as it highlighted some very salient points about evaluation use. I am currently enrolled in a course on Program Evaluation and the information you provided was extremely valuable in furthering my understanding of the difficulties that can be encountered in the process.

I definitely concur that one of the biggest problems we confront is that “evaluations stay on the shelf and do not go beyond the covers of the report as their findings are not owned and used by the management and implementation team.” As a teacher, I see this occurring all the time. The Ministry of Education invests a lot of time and effort in preparing documents for educators, but quite frequently these resources are never utilized and simply collect dust. Patton (2013) indicates that “there is compliance is just to get things done without real attention to use.” This is unfortunately the scenario in many organizations, but the issue can be effectively addressed by using some of the tricks that you recommended in your blog.

What resonated with me the most was the suggestion to use a sandwich approach, in which the positive aspects of the program are addressed first rather than delving solely into the problems that exist. The idea of showing appreciation to others was quite noteworthy, as I can see this having a favourable impact on employees. Given that people always complain about a lack of time to read the materials or findings from an evaluation, I agree that a presentation using visuals would be quite effective. It is certainly more appealing and gives everyone a clearer understanding. Sometimes the jargon used in texts can be quite complicated for some readers, and this could ultimately deter them.

One of the main goals of an evaluation is to identify issues and find meaningful ways to deal with them so as to bring about change. The tips that you have shared will certainly help to alleviate some of the present concerns that we all face with regard to evaluation use. Thank you for sharing.

Regards,

Saadia Isahac

An insightful piece