Hello, we’re Goldie MacDonald, Associate Director for Evaluation in the Center for Surveillance, Epidemiology, and Laboratory Services at the Centers for Disease Control and Prevention, and Jeff Engel, Executive Director of the Council of State and Territorial Epidemiologists. We co-chair the Digital Bridge Evaluation Committee, which is composed of professionals from state and local health departments, federal and non-governmental organizations, and the private sector.

Better a diamond with a flaw than a pebble without.

—Proverb

In many organizations, it’s easier to access evaluation reports than evaluation plans, especially as personnel or priorities change. Evaluation plans are usually shared with primary stakeholders, but not always disseminated widely. For example, some plans are not available because the content is sensitive or not user-friendly. Nonetheless, sharing an evaluation plan widely can contribute to transparency and richer discussions about evaluation quality earlier in the evaluation process.

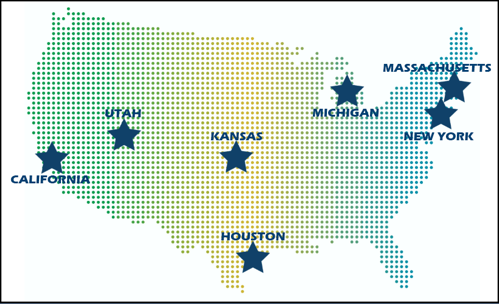

One example of sharing an evaluation plan well beyond primary stakeholders is the Digital Bridge (DB) multisite evaluation led by the Public Health Informatics Institute. DB convenes decision makers in health care, public health, and health information technology to address shared information exchange challenges. DB stakeholders developed a multi-jurisdictional approach to electronic case reporting (eCR) in demonstration sites nationwide. These sites aim to automate transmission of case reports from electronic health records to public health agencies. eCR can result in earlier detection of health-related conditions or events of public concern, more timely intervention, and lowered disease transmission.

The DB Evaluation Committee led development of an evaluation plan for these sites and referenced the Program Evaluation Standards throughout the planning process. Because eCR activities continue to develop and evolve nationwide, we are currently interested in Standard E1 Evaluation Documentation in the Evaluation Accountability domain. E1 calls for documentation of evaluation purposes, designs, procedures, data, and outcomes. We realized that the evaluation plan is crucial to this documentation, and that sharing it widely contributes to dialogue and transparency in meaningful ways. For example, anyone can access the evaluation plan to support current or future eCR activities. Stakeholders and others can consider the quality of the evaluation in more detail as implementation continues. And posting the plan on a public-facing website paves the way to sharing additional artifacts of the evaluation widely.

Lessons Learned: The author of the proverb above prefers a gemstone with a flaw to a small rock without one. Stakeholders in this evaluation likely agree, but they surely want to understand any flaws in the evaluation plan. We need to examine an evaluation to determine its merit or worth—Dan Stufflebeam called this the metaevaluation imperative. An evaluation plan is a key artifact of an evaluation that can be examined even as the evaluation unfolds. For example, stakeholders in this evaluation continue to provide crucial information about practicalities of data collection not addressed in the plan—flaws revealed when discussing the plan prior to implementation. In our case, stakeholders chose the diamond, but transparency and documentation help us to see and understand any flaws on the path to a meaningful evaluation.

Do you have questions, concerns, kudos, or content to extend this aea365 contribution? Please add them in the comments section for this post on the aea365 webpage so that we may enrich our community of practice. Would you like to submit an aea365 Tip? Please send a note of interest to aea365@eval.org. aea365 is sponsored by the American Evaluation Association and provides a Tip-a-Day by and for evaluators.

Hello Goldie MacDonald and Jeff Engel,

I enjoyed reading about how the Digital Bridge (DB) multisite evaluation was able to share an evaluation plan via their website.

I am intrigued by this article for a few reasons. I agree, as many likely do, with what you’ve said about sharing an evaluation plan beyond just the primary stakeholders, in that it “can contribute to transparency and richer discussions about evaluation quality earlier in the evaluation process.” It seems quite straightforward: the more transparent the evaluative process is, the more collaborative it is, resulting in an evaluation that’s more reflective of the needs of the stakeholders. And so I’m wondering, why don’t, or can’t, other evaluative organizations follow suit? Additionally, how have you been able to get around the limits that other organizations face, such as content that is sensitive or not user-friendly?

Sharing the evaluation plan widely likely helps with creating a trusting, collaborative team between evaluators and program stakeholders as well. This may, in turn, result in a higher rate of evaluation use, as you’ve involved potential users, like the public, “in defining the study and helping to interpret results, and through reporting results to them regularly while the study is in progress” (Weiss, 1998). I’m wondering if you’ve found this to be the case? Have users found it helpful to have access to evaluation plans? Do users read and contribute to the evaluation plan? If so, how many? And finally, what have been the pitfalls in sharing evaluation plans and reports while evaluations are underway?

Thanks for sharing your success story. I do hope to hear back from you in order to learn more about the process that you’ve followed.