Hello! We are Emily Moore, Meredith Pinto, and Sam Cruise. We are evaluators supporting the Centers for Disease Control and Prevention (CDC), Division of Global Health Protection (DGHP) Monitoring and Evaluation Team, led by Anja Minnick. Emily and Meredith are Public Health Analysts from RTI International, and Sam is a CDC Evaluation Fellow. We work on global health security programs.

CDC works to “protect America from health, safety, and security threats both foreign and in the U.S.” Within CDC, DGHP supports the agency’s mission by working with countries and implementing partners to help build public health capacities, advance public health research, and respond to public health emergencies in times of crisis. DGHP’s capacity-building efforts prepare countries for emergencies by creating and strengthening global health laboratory and surveillance systems and by training a ready workforce of public health professionals capable of deployment during any emergency.

Our previous data collection efforts monitored and evaluated countries’ overall capacity to respond to outbreaks. Those data did not reflect DGHP’s direct contributions to emergency responses, nor did they provide a comprehensive picture of the programs the division supports or the baselines against which progress is measured. To address these gaps, we set out to develop standardized emergency response indicators the division could use to measure how it supports global emergency responses.

Our journey to develop indicators measuring DGHP’s contributions to public health emergency responses began with a formative evaluation. Working with a steering committee of subject matter experts, we conducted a division-wide survey, document reviews, and in-depth staff interviews to capture how the division supports emergency responses. We then held a 3-hour virtual workshop with DGHP leaders to present the evaluation findings. In the workshop, we used participatory activities to develop priority evaluation questions to inform indicator development. We used the workshop results and evaluation findings to create a logic model reflecting DGHP’s vision for continued support in responding to public health emergencies. We are currently reviewing the logic model with leaders and evaluation participants for approval. Once it is approved, we will use the logic model and findings to design relevant indicators and evaluation questions that measure the division’s contributions during public health emergencies. In the future, the resulting data can be used to improve our global health security programs.

Hot Tips

Utilize a steering committee – We engaged a committee of division-wide emergency response experts, including both Headquarters and field staff, to provide input and feedback on everything from our evaluation design and purpose to results validation. This engagement ensured that our evaluation accurately documented DGHP’s emergency response support.

Validate preliminary findings – At our evaluation midpoint, we presented our preliminary findings to the steering committee and division leaders. This presentation helped us identify gaps in our results and determine which additional interviews were needed to capture the full breadth of DGHP’s support.

Cool Tricks

Prep workshop participants – Before our virtual workshop, we created and disseminated a Participant Guide that included the schedule, an overview of the evaluation results, and an introduction to the various workshop activities.

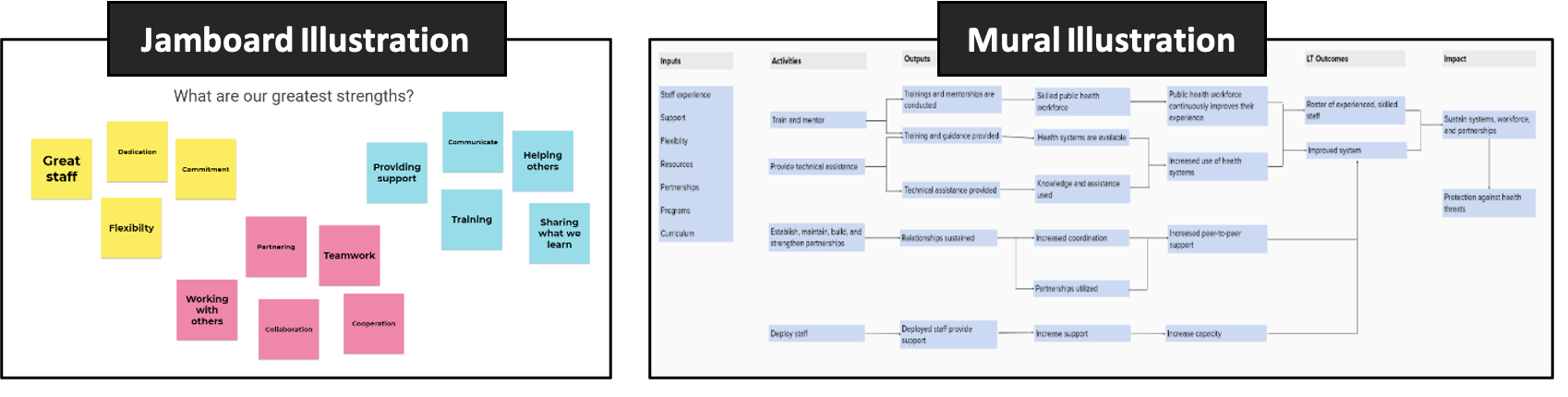

Use interactive platforms – Virtual platforms allow multiple users to work together in a virtual space simultaneously. We used Jamboard™ for the virtual world café during our workshop and Mural™ for logic model development, but there are lots of good options out there! Use of trade names and commercial sources is for identification only and does not imply endorsement by the CDC or the U.S. Department of Health and Human Services.

The American Evaluation Association is hosting Disaster and Emergency Management Evaluation (DEME) Topical Interest Group (TIG) Week. The contributions all this week to AEA365 come from our DEME TIG members. Do you have questions, concerns, kudos, or content to extend this AEA365 contribution? Please add them in the comments section for this post on the AEA365 webpage so that we may enrich our community of practice. Would you like to submit an AEA365 Tip? Please send a note of interest to AEA365@eval.org. AEA365 is sponsored by the American Evaluation Association and provides a Tip-a-Day by and for evaluators. The views and opinions expressed on the AEA365 blog are solely those of the original authors and other contributors. These views and opinions do not necessarily represent those of the American Evaluation Association, and/or any/all contributors to this site.