We are Grisel M. Robles-Schrader and Keith A. Herzog of the Northwestern University Clinical and Translational Sciences (NUCATS) Institute. The Center for Community Health (CCH), one of 10 centers and programs within NUCATS, is charged with offering support and resources that catalyze meaningful community and academic engagement across the research spectrum, with the aim of improving health and health equity. Community engagement centers across the nationwide Clinical and Translational Science Award (CTSA) consortium offer a similar range of programs and services.

We facilitated efforts within our institution to develop an evaluation infrastructure to better understand, improve, promote, and evaluate the community engagement support and services that CCH offers to investigators. By engaging key stakeholders within CCH and NUCATS more broadly, we concentrated our efforts on metrics and data collection tools relevant to our team’s work.

As part of our comprehensive evaluation plan, the CCH developed community engagement metrics covering six domains aimed at measuring engagement support and outcomes beyond publications and funding:

- consultation services,

- capacity building & education,

- fiscal support,

- partnership development,

- institutional-level changes, and

- community-level changes.

Rad Resources:

- REDCap – Research Electronic Data Capture is a secure web application for building and managing online surveys and databases.

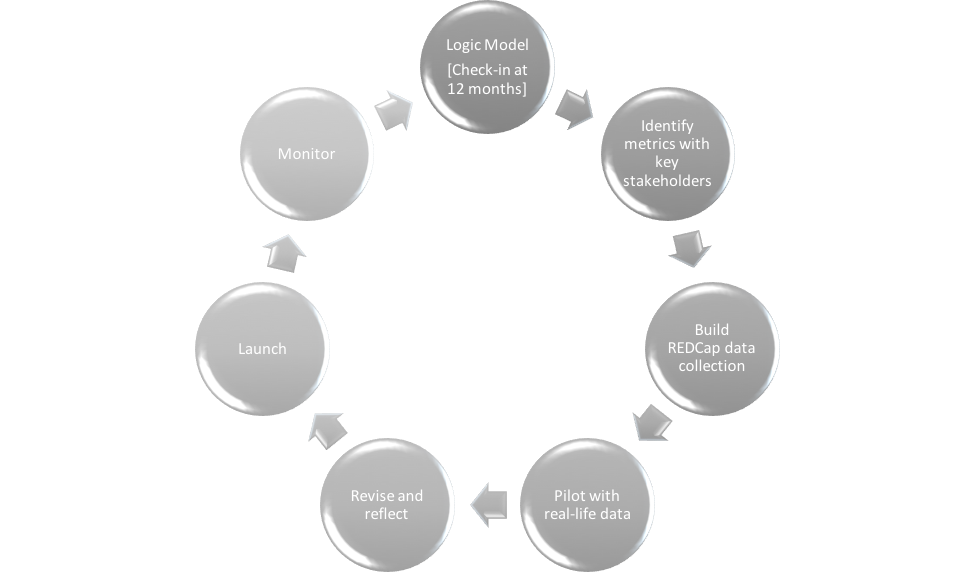

Phases of Development & Feedback Loops

Using the REDCap data collection tool enabled us to refine and adapt our “dream list” of metrics, based on our comprehensive logic model. In close collaboration with key internal stakeholders, we implemented the CCH Engagement and Tracking project, focused on consultation services. At the end of the 12-month pilot period, we reconnected with these stakeholders to assess what was working well, what was not working well, and what needed to be revised. For example, we focused our review on categories that consistently had high rates of missing data and discussed whether those questions were still relevant to track. We also used the 12-month review as an opportunity to assess revisions to our logic model, informed by evidence from the consultation tracking form.
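For teams that want to run a similar missing-data review, here is a minimal Python sketch of one way to do it. It is not the process we used at CCH. It assumes the tracking form has been exported to CSV with unanswered fields left blank, and the field names, sample records, and 50% review threshold are all hypothetical.

```python
import csv
import io


def missing_rates(rows, fields):
    """Return the share of records with a blank value for each field."""
    total = len(rows)
    rates = {}
    for field in fields:
        blank = sum(1 for row in rows if not (row.get(field) or "").strip())
        rates[field] = blank / total if total else 0.0
    return rates


def flag_for_review(rates, threshold=0.5):
    """List fields whose missing rate meets the threshold, highest first."""
    flagged = [field for field, rate in rates.items() if rate >= threshold]
    return sorted(flagged, key=lambda field: rates[field], reverse=True)


# Hypothetical export: the field names and records are invented for illustration.
SAMPLE_EXPORT = """record_id,consult_type,partner_org,funding_source
1,design,Clinic A,
2,design,,
3,,Clinic B,
4,dissemination,,grant
"""

rows = list(csv.DictReader(io.StringIO(SAMPLE_EXPORT)))
fields = [name for name in rows[0].keys() if name != "record_id"]

rates = missing_rates(rows, fields)
flagged = flag_for_review(rates, threshold=0.5)  # threshold is an assumption

for field in fields:
    print(f"{field}: {rates[field]:.0%} missing")
print("Discuss with stakeholders:", flagged)
```

The flagged list is only a starting point for the stakeholder conversation. A field with a high missing rate may still be worth tracking if the team agrees the question matters and the form or instructions can be improved.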

Hot Tips:

- Engage key stakeholders throughout the evaluation development and implementation processes. This ensures you collect relevant data, utilizing strategies that are meaningful for your team.

- Utilize the 80/20 rule to avoid data collection creep (i.e., trying to collect everything, all the time). Ask yourselves: “What do we consistently encounter, do, collect, and share 80% of the time?”

- Pilot data collection tools using real-world data. Refine the tool. Revise and repeat (as necessary).

- Establish strong project management skills to keep the group on task and to secure buy-in from key stakeholders.

- Support standardization by developing manuals with succinct definitions and concrete examples. Include instructions and contextual links within REDCap so they are available when your team enters data.

The American Evaluation Association is celebrating Chicagoland Evaluation Association (CEA) Affiliate Week. The contributions all this week to aea365 come from CEA members. Do you have questions, concerns, kudos, or content to extend this aea365 contribution? Please add them in the comments section for this post on the aea365 webpage so that we may enrich our community of practice. Would you like to submit an aea365 Tip? Please send a note of interest to aea365@eval.org. aea365 is sponsored by the American Evaluation Association and provides a Tip-a-Day by and for evaluators.
