My name is Ama Nyame-Mensah, and I am a doctoral student in the Social Welfare program at the University of Pennsylvania.

Likert scales are commonly used in program evaluation. However, despite their widespread popularity, Likert scales are often misused and poorly constructed, which can result in misleading evaluation outcomes. Consider the following tips when using or creating Likert scales:

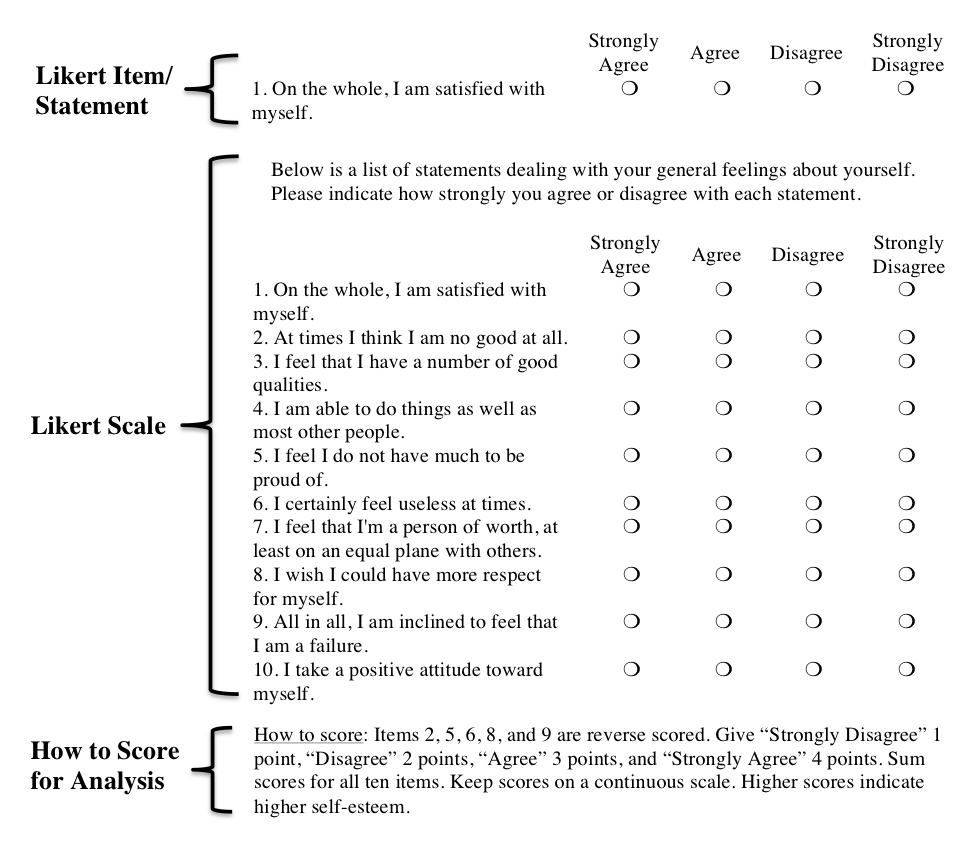

Hot Tip #1: Use the term correctly

A Likert scale consists of a series of statements that measure individuals’ attitudes, beliefs, or perceptions about a topic. For each statement (or Likert item), respondents are asked to choose the one option that best aligns with their view from a list of ordered response choices. Numeric values are assigned to each answer choice for the purpose of analysis (e.g., 1 = Strongly Disagree, 4 = Strongly Agree). Each respondent’s responses to the set of statements are then combined into a single composite score/variable.
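The scoring step described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the two middle labels, the item names (`q1` to `q3`), and the `reverse_items` option are hypothetical and do not come from any particular instrument. Reverse-coding is included because negatively worded items are usually flipped before summing so that a higher score always points in the same direction.

```python
# Hypothetical 4-point agreement scale. Only the endpoints
# (1 = Strongly Disagree, 4 = Strongly Agree) come from the post;
# the middle labels are assumed for illustration.
RESPONSE_CODES = {
    "Strongly Disagree": 1,
    "Disagree": 2,
    "Agree": 3,
    "Strongly Agree": 4,
}
LOWEST = min(RESPONSE_CODES.values())
HIGHEST = max(RESPONSE_CODES.values())


def composite_score(responses, reverse_items=()):
    """Sum one respondent's coded answers into a single composite score.

    responses: dict mapping item name -> chosen label.
    reverse_items: names of negatively worded items whose codes are
        flipped (1 <-> 4, 2 <-> 3) before summing.
    """
    total = 0
    for item, label in responses.items():
        code = RESPONSE_CODES[label]
        if item in reverse_items:
            code = (LOWEST + HIGHEST) - code
        total += code
    return total


# Made-up answers from one respondent; q3 is treated as negatively worded.
answers = {"q1": "Agree", "q2": "Strongly Agree", "q3": "Disagree"}
score = composite_score(answers, reverse_items={"q3"})
print(score)
```

Summing is only one way to combine items; averaging the codes is a common alternative and keeps the composite on the original response metric.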

Hot Tip #2: Label your scale appropriately

To avoid ambiguity, assign a “label” to each response option. Make sure to use ordered labels that are descriptive and meaningful to respondents.

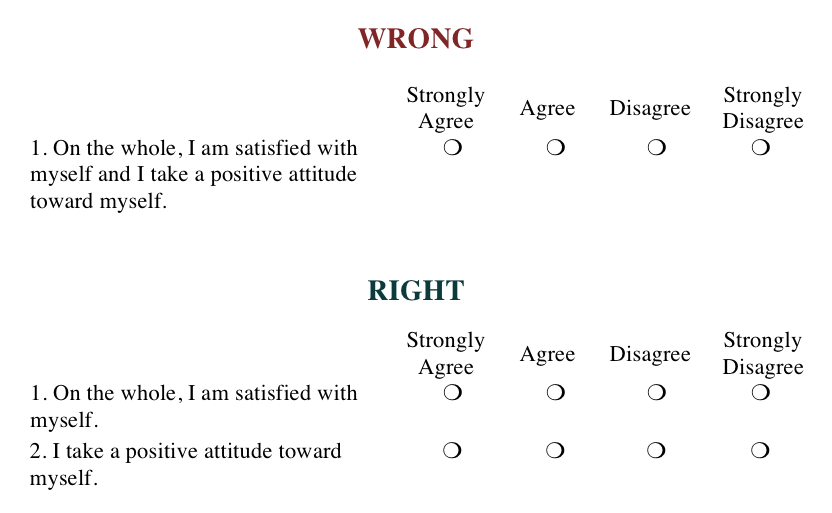

Hot Tip #3: One statement per item

Avoid items that contain multiple statements but allow only one answer. Such "double-barreled" items can confuse respondents and introduce unnecessary error into your data. Look for the words “and” and “or” as a signal that an item may be double-barreled.

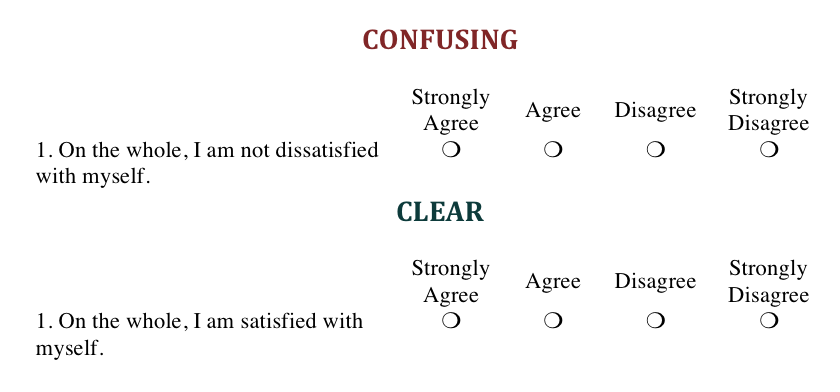
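If you have a long list of draft items, the "and"/"or" check can be roughed out in Python. This is a minimal sketch, not a validated screening tool: the function name and the example items are made up, and a flagged item still needs a human read, since "and" and "or" also appear in perfectly good single-statement items.

```python
import re

# Match "and" / "or" only as whole words, so that words such as
# "for" or "understand" are not flagged.
CONJUNCTION = re.compile(r"\b(and|or)\b", re.IGNORECASE)


def flag_possible_double_barreled(items):
    """Return the items that contain 'and' or 'or' for manual review."""
    return [item for item in items if CONJUNCTION.search(item)]


# Hypothetical draft items, written only for this example.
draft_items = [
    "I am satisfied with my job.",
    "I am satisfied with my job and my salary.",
    "I look forward to going to work.",
]
flagged = flag_possible_double_barreled(draft_items)
for item in flagged:
    print("Review:", item)
```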

Hot Tip #4: Avoid multiple negatives

Rephrase statements that contain multiple negatives as positive ones. Statements with multiple negatives are confusing and difficult to interpret.

Hot Tip #5: Keep it balanced

Regardless of whether you use an odd or even number of response choices, include an equal number of positive and negative options for respondents to choose from. An unbalanced scale can produce response bias.

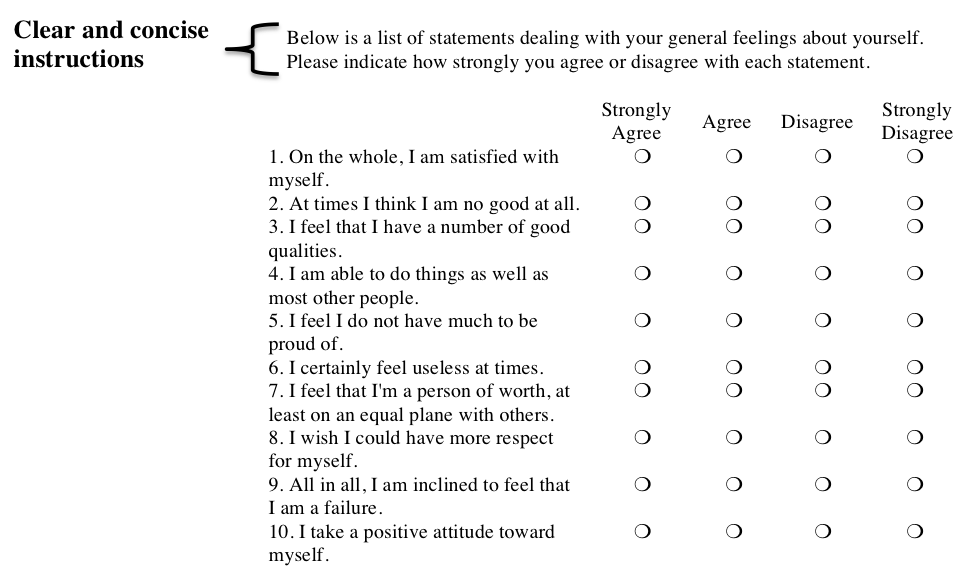

Hot Tip #6: Provide instructions

Tell respondents how you want them to answer the question. This helps ensure that respondents understand and respond to the question as intended.

Hot Tip #7: Pre-test a new scale

If you create a Likert scale, pre-test it with a small group of coworkers or members of your target population. This can help you determine whether your items are clear and whether your scale is reliable and valid.
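One way to get a first look at reliability from pre-test data is Cronbach's alpha, a widely used internal-consistency statistic. The post does not name a specific statistic, so treat this choice as an assumption. The sketch below uses only Python's standard library, and the pilot responses are invented for illustration. Alpha says nothing about validity, and with a very small pre-test group the estimate will be unstable.

```python
from statistics import variance


def cronbach_alpha(rows):
    """Cronbach's alpha for a list of respondents' coded item answers.

    rows: one list per respondent, each holding that respondent's numeric
        codes for the same k items (reverse-code negative items first).
    Formula: alpha = k / (k - 1) * (1 - sum(item variances) / variance(totals))
    """
    k = len(rows[0])
    item_variances = [variance(column) for column in zip(*rows)]
    total_variance = variance([sum(row) for row in rows])
    return k / (k - 1) * (1 - sum(item_variances) / total_variance)


# Invented pilot data: 5 respondents x 3 items on a 1-4 scale.
pilot = [
    [3, 4, 3],
    [2, 2, 1],
    [4, 4, 4],
    [1, 2, 2],
    [3, 3, 4],
]
alpha = cronbach_alpha(pilot)
print(round(alpha, 2))
```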

The Likert scale and items used in this blog post are adapted from the Rosenberg Self-Esteem Scale.

Do you have questions, concerns, kudos, or content to extend this aea365 contribution? Please add them in the comments section for this post on the aea365 webpage so that we may enrich our community of practice. Would you like to submit an aea365 Tip? Please send a note of interest to aea365@eval.org. aea365 is sponsored by the American Evaluation Association and provides a Tip-a-Day by and for evaluators.

Ama Nyame-Mensah,

I really enjoyed your post about the Likert scale. As an undergraduate student, I have not had much practice creating or interpreting Likert scales, but I have participated in many surveys and been subjected to the types of statements you describe. I agree with keeping one statement per item. It can be very confusing to work out what an item is asking when it contains multiple statements. I will keep these tips in mind when it comes time to design a scale for future research. Thank you for the great information.

Darcy

Hi Phil,

Thanks for your feedback. I believe your confusion may stem from a common misconception about the difference between traditional Likert scales and questions/items that simply use a Likert-style response format.

The original Likert scale contained five response options: strongly approve, approve, undecided, disapprove, and strongly disapprove. However, recent research has found that having a neutral/middle option is not always needed, and, in fact, may skew your results.

While Likert-type scales and questions with Likert-like response options are very similar to the traditional Likert scale, there are some key defining features of traditional Likert scales:

1. They attempt to measure an underlying latent construct using a range of positive and negative statements;

2. They are measured using a continuum of response options representing one extreme at one end and the opposite extreme at the other;

3. The items that make up the scale should not be independent of each other, and should be derived from theory or past empirical investigation; and

4. The items in the scale can be summed or otherwise combined to form a score.

I am sure there are features that are missing from the list above. For more information about the traditional Likert scale see:

Likert, R. (1932). A Technique for the Measurement of Attitudes. Archives of Psychology, 140, 1–55.

This topic hits on something that I am sure I learned in grad school 35 years ago and seems to have been forgotten. Or did my memory change? (See the recent PBS Nova episode on memory!)

I clearly remember being taught the difference between a “Likert scale” and a “Likert-type” scale. The latter is what is described here. What my professor told us was that Dr. Likert actually used what we today would call a focus group to come up with concrete terms to fit each point of his scale, often with different sets for each question. So, he never had “Very Offended”, “Somewhat Offended”, etc., but instead would develop anchors more like “Abhorred”, “Dismayed”, “Miffed”, and so forth. All anchors were based on what the consensus of his focus group felt were good words to describe equal intervals of whatever concept was to be measured.

Never since then have I heard of anyone going to the trouble of developing such precise anchors using any kind of sample.

What do others remember? My quick search with Google found people using the terms “Likert Scale” and “Likert-type scale” interchangeably, with no mention of Dr. Likert’s original methods.

For a discussion of the more common issues and problems with Likert scaling please see: Bayley, S. 2001, ‘Measuring Customer Satisfaction’, Evaluation Journal of Australasia, vol. 1, no. 1, pp. 8-17.