Hi, we’re Kristy Moster and Jan Matulis. We’re evaluation specialists in the Education and Organizational Effectiveness Department at Cincinnati Children’s Hospital Medical Center.

Over the past year, our team has been engaged in the analysis of data from a three-year project with the Robert Wood Johnson Foundation focused on quality improvement training in healthcare. The data from the project includes information from surveys, interviews, knowledge assessments, observations of training, document analysis, and peer and instructor ratings of participants’ projects. Our task as a team was to pull all of the information together to create a clear, accurate, coherent story of the successes and challenges of quality improvement training at our institution. This work was also discussed as part of a roundtable at the AEA Conference in November 2011.

Hot Tip:

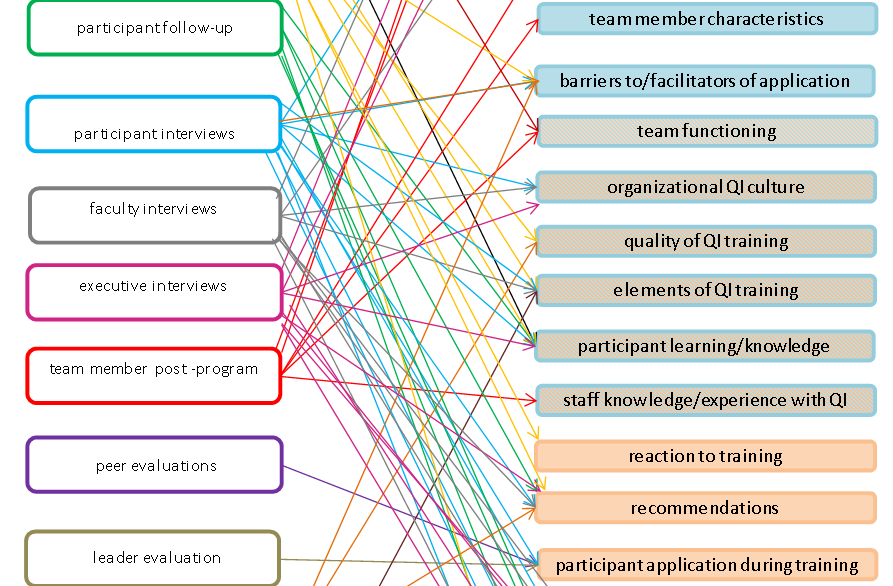

- Create a visual framework. Guided by an example found in transdisciplinary science, we created a visual framework to represent the extensive data and data sources from the project, including their interconnections. Starting with the logic model, we identified a set of themes being addressed by the evaluation, and then matched individual survey items, interview questions, etc. to the themes. From there we created a framework to show connections between the data sources and themes. This framework helped to create a shared understanding of the data for our research team, some of whom were fairly new to the project when the analysis began. It also provided structure to our thinking and our work. For example, the framework helped us to ensure that all themes were addressed by multiple data sources and also to determine which data sources to target first for different phases of our analysis (in our case, those sources that addressed the most themes of highest interest).
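The framework described above is essentially a mapping from data sources to the themes their items address. The sketch below assumes a simple Python dictionary representation and shows the two uses mentioned in the tip: checking that every theme is covered by more than one data source, and ranking sources by how many high-interest themes they address. The source names, theme names, the two-source threshold, and the function names are all hypothetical placeholders, not the project's actual instruments or themes.

```python
from collections import defaultdict

# Hypothetical framework: each data source maps to the set of themes its
# items address. Names are illustrative placeholders, not the project's own.
FRAMEWORK = {
    "participant survey": {"knowledge", "application", "satisfaction"},
    "interviews": {"application", "barriers", "satisfaction"},
    "knowledge assessment": {"knowledge"},
    "project ratings": {"application", "outcomes"},
    "training observations": {"satisfaction", "barriers"},
}

# Hypothetical full theme list, e.g. as drawn from a logic model.
ALL_THEMES = {"knowledge", "application", "satisfaction", "barriers", "outcomes"}


def sources_by_theme(framework):
    """Invert the source -> themes mapping into theme -> sources."""
    by_theme = defaultdict(set)
    for source, themes in framework.items():
        for theme in themes:
            by_theme[theme].add(source)
    return by_theme


def under_covered_themes(framework, all_themes, min_sources=2):
    """Return themes addressed by fewer than min_sources data sources."""
    by_theme = sources_by_theme(framework)
    return sorted(t for t in all_themes if len(by_theme.get(t, set())) < min_sources)


def prioritize_sources(framework, priority_themes):
    """Rank sources by how many priority themes they address (ties alphabetical)."""
    scores = {s: len(themes & priority_themes) for s, themes in framework.items()}
    return sorted(scores, key=lambda s: (-scores[s], s))


if __name__ == "__main__":
    print(under_covered_themes(FRAMEWORK, ALL_THEMES))
    print(prioritize_sources(FRAMEWORK, {"application", "outcomes"}))
```

With these placeholder values, only `outcomes` is flagged as covered by a single source, and `project ratings` ranks first as a candidate for an early phase of analysis. Passing the full theme list separately, rather than deriving it from the mapping, means a theme with no data sources at all is still reported.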

Lesson Learned:

- Consistency is crucial. By this we mean that the interconnections among all instruments and items need to be well thought out. This is especially difficult in a multi-year evaluation conducted by a research team whose membership changes over time. As new instruments are created, it is important to understand their connections to other instruments and to the relevant themes so that the data can later be compared and combined.

Resource:

The American Evaluation Association is celebrating Mixed Method Evaluation TIG Week. The contributions all week come from MME members. Do you have questions, concerns, kudos, or content to extend this aea365 contribution? Please add them in the comments section for this post on the aea365 webpage so that we may enrich our community of practice. Would you like to submit an aea365 Tip? Please send a note of interest to aea365@eval.org. aea365 is sponsored by the American Evaluation Association and provides a Tip-a-Day by and for evaluators.

Re: Create a visual framework

A good visual framework can be very useful, especially when dealing with more complex projects.

But I felt you could have done better than the diagram shown above. There are two related alternatives:

1. A matrix, with the items in the first column listed down the left side and the items in the second column listed across the top, and with each cell value showing which row item is connected to which column item.

2. This data can then be imported into one of many network visualisation software packages, which would make the linkages much more visible and comprehensible than in the diagram above. Send me the data if you like and I will send back an example diagram. For more information on network visualisations, see http://mande.co.uk/special-issues/network-models/
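The matrix-then-network approach suggested in this comment can be sketched in a few lines. Assuming a data-source-to-theme mapping like the one behind the framework (the names below are hypothetical placeholders), the code builds a 0/1 matrix with data sources as rows and themes as columns, then writes each non-zero cell as one row of a plain `Source,Target` CSV edge list. Many network visualisation packages can import an edge list of this kind, but check the exact import format your chosen package expects.

```python
import csv
import io

# Hypothetical data source -> themes mapping (placeholder names).
framework = {
    "interviews": {"application", "barriers"},
    "knowledge assessment": {"knowledge"},
    "survey": {"knowledge", "application"},
}


def to_matrix(framework):
    """Return (row labels, column labels, 0/1 matrix): sources as rows, themes as columns."""
    rows = sorted(framework)
    cols = sorted(set().union(*framework.values()))
    matrix = [[1 if col in framework[row] else 0 for col in cols] for row in rows]
    return rows, cols, matrix


def to_edge_list_csv(framework):
    """Write one Source,Target line per connection (i.e. per non-zero matrix cell)."""
    buf = io.StringIO()
    writer = csv.writer(buf, lineterminator="\n")
    writer.writerow(["Source", "Target"])
    for source in sorted(framework):
        for theme in sorted(framework[source]):
            writer.writerow([source, theme])
    return buf.getvalue()


if __name__ == "__main__":
    rows, cols, matrix = to_matrix(framework)
    # Print the matrix as a tab-separated table.
    print("\t".join([""] + cols))
    for label, values in zip(rows, matrix):
        print("\t".join([label] + [str(v) for v in values]))
    print(to_edge_list_csv(framework))
```

Keeping the matrix and the edge list derived from the same mapping means the tabular view and the network diagram cannot drift apart as instruments are added.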