Hi. I’m Heather Krause, founder of Datassist. I’ve been doing data analysis around the globe for a long time and am an advocate for using ethics and equity as the foundations for all our work. My presentation on Feminist Data Analysis focused on step-by-step processes to avoid sexism, racism, homophobia and more in using data. Feminist data analysis requires us to examine the many assumptions embedded in our habitual data practices, including where power dynamics are coming into play, what assumptions and values are being prioritized over others, and who is benefitting from all aspects of our choices around data and analysis.

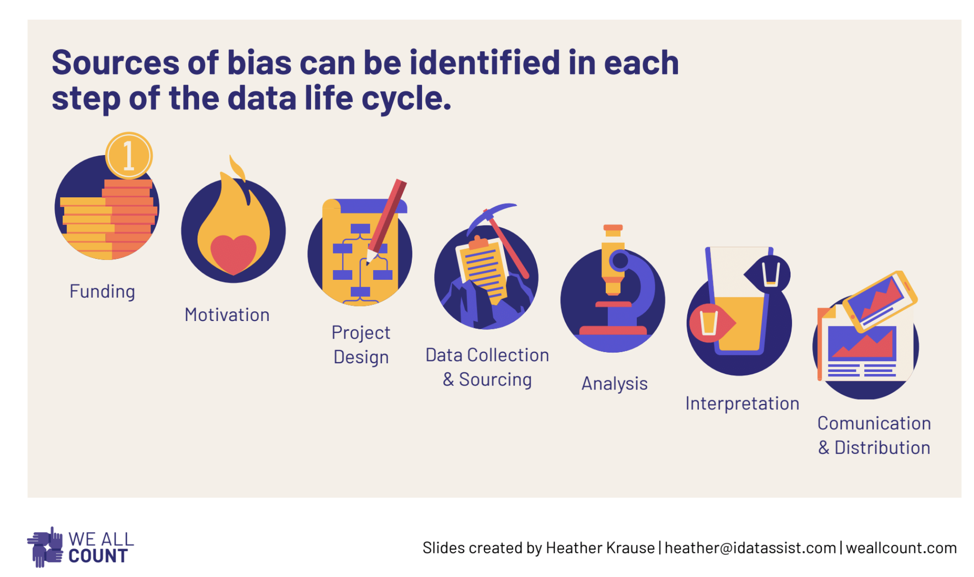

So often data products are thought of as objective, scientific ways of figuring out what’s really going on and what is working. However, data and statistics are never actually objective. I think about data projects in terms of the seven steps of the data life cycle. Every single step of data and evaluation is deeply embedded with the worldviews and hidden implicit biases of the people involved. Each step presents opportunities to increase equity, inclusion, and fairness.

Lessons Learned:

My talk walked us through the steps of the data life cycle and provided key questions and tools for identifying and correcting sexism, bias and more at each step. For example, in the Project Design step, constructing the methodology of any data project has many potential equity pitfalls. Probably the most prevalent bias here is towards comfort: what do the people involved know how to do? The number of accidentally sexist or racist method choices is staggering, and they are often due simply to limits of understanding, training, and level of comfort. The design of a data project is inherently subjective because it runs up against the limits of what the people running it think to measure. Big donors also tend to direct their funds towards what’s comfortable and often monolithic. You can almost forgive someone who always runs RCTs to try to answer all questions, but you simply can’t.

The Data Analysis step is often seen as the most objective and free from bias. In reality, there are a huge number of assumptions, interpretations, and conceptual biases that are an inextricable part of data analysis. A statistical impact evaluation model, for example, can easily be built to be technically correct and simultaneously biased against women, or other vulnerable groups.
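To make that last point concrete, here is a minimal sketch in Python. The numbers are invented purely for illustration and do not come from any real program, and the function names are hypothetical. It shows how a pooled average effect can be computed correctly and still hide the fact that the same program leaves women worse off.

```python
from statistics import mean

# Hypothetical (treated?, gender, outcome) rows, invented for illustration only.
ROWS = [
    (True, "men", 16), (True, "men", 18), (True, "men", 17), (True, "men", 17),
    (False, "men", 10), (False, "men", 11), (False, "men", 9), (False, "men", 10),
    (True, "women", 8), (True, "women", 8),
    (False, "women", 10), (False, "women", 10),
]


def average_effect(rows):
    """Difference in mean outcome between treated and untreated rows."""
    treated = [outcome for is_treated, _, outcome in rows if is_treated]
    control = [outcome for is_treated, _, outcome in rows if not is_treated]
    return mean(treated) - mean(control)


def effect_by_group(rows):
    """The same estimate, computed separately for each gender."""
    groups = sorted({gender for _, gender, _ in rows})
    return {g: average_effect([r for r in rows if r[1] == g]) for g in groups}


pooled = average_effect(ROWS)      # one number for everyone
by_group = effect_by_group(ROWS)   # one number per gender

print("pooled effect:", pooled)
print("effect by group:", by_group)
```

With these made-up rows, the pooled estimate is positive while the estimate for women alone is negative. Neither calculation is wrong; the choice to report only the pooled number is where the bias enters.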

Rad Resources:

Many participants in the event shared their own experiences, suggested tools and resources and stood in long lines after the event to ask questions. These conversations led to the development of We All Count, a project for equity in data. We’re sharing tools, tips, and stories on this site. We would love for you to add your story or comments to the community.

The full presentation, including the lists of bias-awareness questions to ask at each step, is available for anyone to see here.

There is also a draft of a really great new book about Feminist Data from Catherine D’Ignazio and Lauren Klein here.

The American Evaluation Association is celebrating Feminist Issues in Evaluation (FIE) TIG Week with our colleagues in the FIE Topical Interest Group. The contributions all this week to aea365 come from our FIE TIG members. Do you have questions, concerns, kudos, or content to extend this aea365 contribution? Please add them in the comments section for this post on the aea365 webpage so that we may enrich our community of practice. Would you like to submit an aea365 Tip? Please send a note of interest to aea365@eval.org. aea365 is sponsored by the American Evaluation Association and provides a Tip-a-Day by and for evaluators.

Thank you for your thoughtful insight towards data and analysis. I agree absolutely with you that data and statistics are never actually truly objective. Once we identify these hidden biases and do not shy away from them, I believe we see the opportunities you speak of to involve equity, inclusion and fairness. In my department and work as a special education teacher, we are constantly looking for ways to increase awareness and open the conversation to speak to these ideas of equity, inclusion and fairness. We also look for ways to provide data surrounding these issues in meaningful, lasting ways, and not simply to check off a box, have a program be labelled as inclusive when it is more integration, or work towards equity in a classroom for a struggling student only to have questions about equality rather than equity. I think the last step you speak of, in terms of communication and distribution, is a very powerful opportunity for these conversations to happen. For those teachers and students who do not understand or do not acknowledge their hidden biases, the process you speak of is where we have the opportunity to widen the conversation and hopefully open new horizons.

Thank you.

Thank you so much for your comment. It’s great to hear from a teacher in your field. Some of my earliest work was on seeing how traditional evaluation metrics did not fit a children’s autism program at all. If you have any particular stories you’d like to share about your work, please reach out and we’ll share them with the entire community.

Heather

The title of your post caught my eye and I was quite engaged reading your perspective on data. I am currently doing my Masters and I’m taking a course on program inquiry and evaluation. It is quite difficult to disagree with the arguments you have put forth. Data is collected and analyzed by human beings and by virtue of this, it is prone to bias, injustice and subjective inquiry. I am reminded of a statement made by Shepard (2000) about the role of assessment in learning. Shepard (2000) stated that “all tests can be corrupted. And all can have a corrupting influence on teaching” (p. 9). I believe the same argument could be made for evaluation or data (collection & analysis) to be specific.

Nonetheless, we do not dismiss assessments from the culture because they can be problematic. Likewise, I appreciate your efforts to intentionally disrupt the status quo by providing strategies and a space for discussion in order to increase equity, inclusion and fairness in the data life cycle. If data is supposed to be useful then it needs to serve all users, and there is a level of accountability on the part of evaluators and stakeholders to ensure an equitable and inclusive process. If we are not intentional about our practices then we will continue to exclude, ignore, and devalue the perspectives of diverse groups, especially women. Providing key questions and tools for identifying bias, sexism and other injustices in the data life cycle is a step in the right direction.

Thank you for sharing and extending my understanding of the data life cycle and the need to be intentional in all aspects of program evaluation.

Reference:

Shepard, L. A. (2000). The role of assessment in a learning culture. Educational Researcher, 29(7), 4-14. doi: 10.3102/0013189X029007004

Hi Tasha. Thank you so much for your comment. And for making the connection between biased tests and biased evaluation. The reference is fantastic. I look forward to reading it.

Good morning,

I really enjoyed your post, as well as the presentation included in the Rad Resources. As someone fairly new to the complex and multi-dimensional field of program evaluation, I found that your post uncovered yet another area of awareness for evaluators and program designers. I specifically enjoyed the inclusion of bias-awareness questions to ask at each point in the process, as it helped me to better understand what I could do to limit bias.

It was very interesting to read what you had to say about how “Every single step of data and evaluation is deeply embedded with the worldviews and hidden implicit biases of the people involved”. Prior to reading your post, this is not something I had adequately considered. I am one of those who viewed statistics and numbers as, as you say, an objective and scientific way to figure out what is going on. Your presentation really opened my eyes to this, and I will be implementing the strategies it identified as I embark on any program evaluation in the future.

Hi Allison. Thank you so much for your comment. I think it’s fairly common for many people to think of data/stats as objective – largely because that’s how they’ve been branded. I would love to hear more stories about what you discover as you work more in the area of program evaluation. Feel free to share your stories with me and I’ll pass them along to the We All Count channels and community. Heather