Hello from Katie Cary! I’m a senior consultant with EMI Consulting, an evaluation and consulting firm based in Seattle, Washington, that focuses on energy efficiency and renewable energy programs and policies. Our firm works predominantly as evaluators and research partners for utility clients. More than ever, these utilities face an uncertain future for their energy efficiency programs, as increasing codes and standards, market transformation, and customer attitudes decrease the efficacy of their bread-and-butter approaches. In this post, I discuss how evaluators and consultants can help utilities navigate this new landscape by using developmental evaluation to stay agile, innovate, and adapt programs as they are being implemented.

But first, what is “developmental evaluation”?

Developmental evaluation is an evaluation approach coined by Michael Quinn Patton that supports the development of innovations in dynamic, complex environments, where knowing “what to do to solve problems is uncertain and key stakeholders are in conflict about how to proceed.” Within the utility industry, evaluators often perform prescriptive, summative evaluations after programs have been running for a few years, with the goal of assessing after the fact whether they worked. Developmental evaluation, by contrast, is about adapting the program as it is being run.

Comparison of Summative versus Developmental Evaluations

Hot Tip:

When faced with uncertain futures, incorporate these key elements of developmental evaluation into your evaluation design:

#1. Incorporate evaluation early. In an uncertain environment, you cannot afford to wait to see if your intervention is working. Build staged evaluations into the program design – it will pay dividends in avoided costs of ineffective programs.

Tip in Action: A program we evaluated was giving out free equipment to customers, and our evaluation found one piece of equipment that customers did not use. The program was able to remove this measure, with no impact on customer satisfaction, before it was sent to thousands of customers.

#2. Be flexible in your approaches. In some evaluations, approaches are designed far in advance of the work being completed. For developmental evaluations, evaluators must be able to adapt their approaches to changing program designs and stakeholder needs.

#3. Iterate and adapt. In evaluating an ever-changing program, evaluators need to evaluate frequently as changes are made, building on past findings and recommendations.

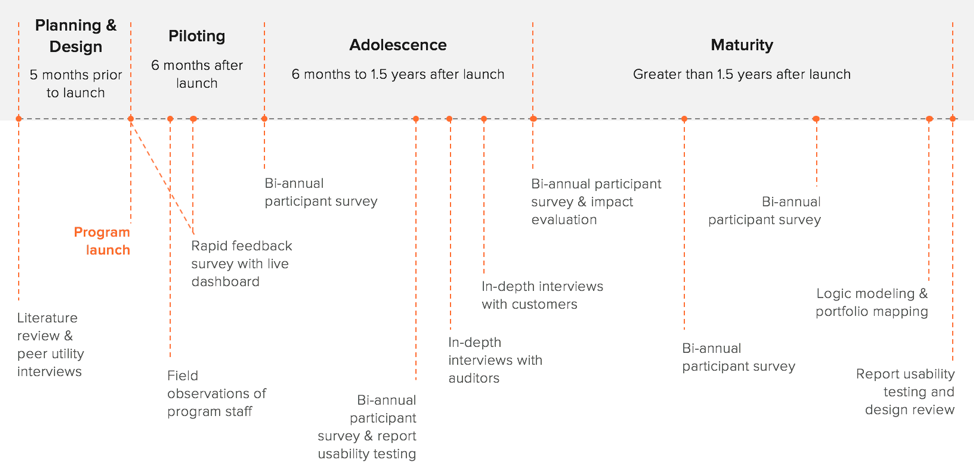

Tip in Action: This image demonstrates the frequency of our evaluation as the program developed.

#4. Don’t be a stranger. To be effective at developmental evaluation, evaluators cannot be kept at arm’s length from program implementation staff; instead, evaluators should be trusted members of the team, kept abreast of any program changes or shifts in the program’s goals.

Rad Resources:

Learn more about developmental evaluation at Better Evaluation and more about our project here.

The American Evaluation Association is celebrating Environmental Program Evaluation TIG Week with our colleagues in the Environmental Program Evaluation Topical Interest Group. The contributions all this week to aea365 come from our EPE TIG members. Do you have questions, concerns, kudos, or content to extend this aea365 contribution? Please add them in the comments section for this post on the aea365 webpage so that we may enrich our community of practice. Would you like to submit an aea365 Tip? Please send a note of interest to aea365@eval.org. aea365 is sponsored by the American Evaluation Association and provides a Tip-a-Day by and for evaluators.

Hi Katie

My name is Aaron Tran and I am a Professional Masters of Education student at Queen’s University in Canada. You may find it interesting that I have no formal background in education. I work as a Paramedic in Toronto, but teach Paramedicine part time as a college instructor. Therefore, so much of my knowledge stems from a science and research background.

I believe developmental evaluation can also be applied to clinical trials. Ongoing assessment of data as it is gathered is so important in a health care setting. There have been multiple human clinical trials where obvious positive results of treatment were seen much earlier than the original trial period duration. Do you think developmental evaluation applies here in the same context? Should we throw out the evaluation question “Does this drug treatment work?” in favor of “What is the dosage we should give for maximum benefit?”

Thanks for your thoughts!

Aaron