Greetings, all! We’re Edward Jackson, Anne Bichsel, Geske Dijkstra and Karin ter Horst, evaluation professionals who have recently evaluated Swiss and Dutch aid programs designed to strengthen national and local governance in developing countries.

Governance is everybody’s business. From the run-up to the 2016 US presidential election to the recent launch of the Global Development Goals, it is clear that governance matters.

But how should governance interventions be evaluated? Here are three tips from our work:

Hot Tips:

1. Extend the knowledge base by building and testing new evaluation criteria.

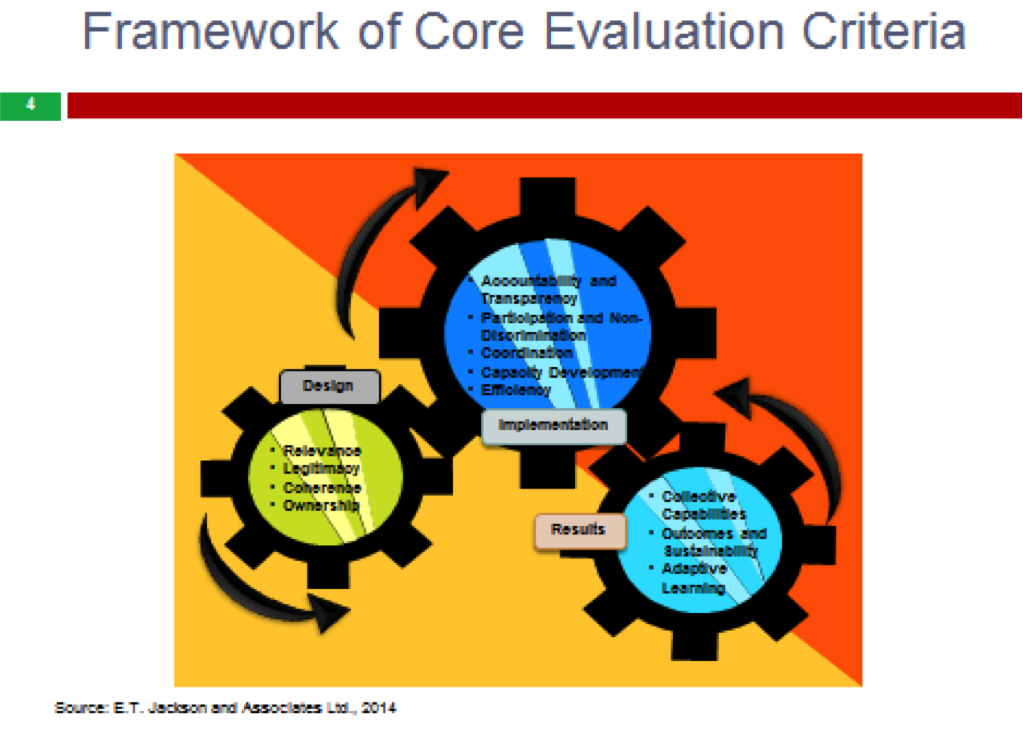

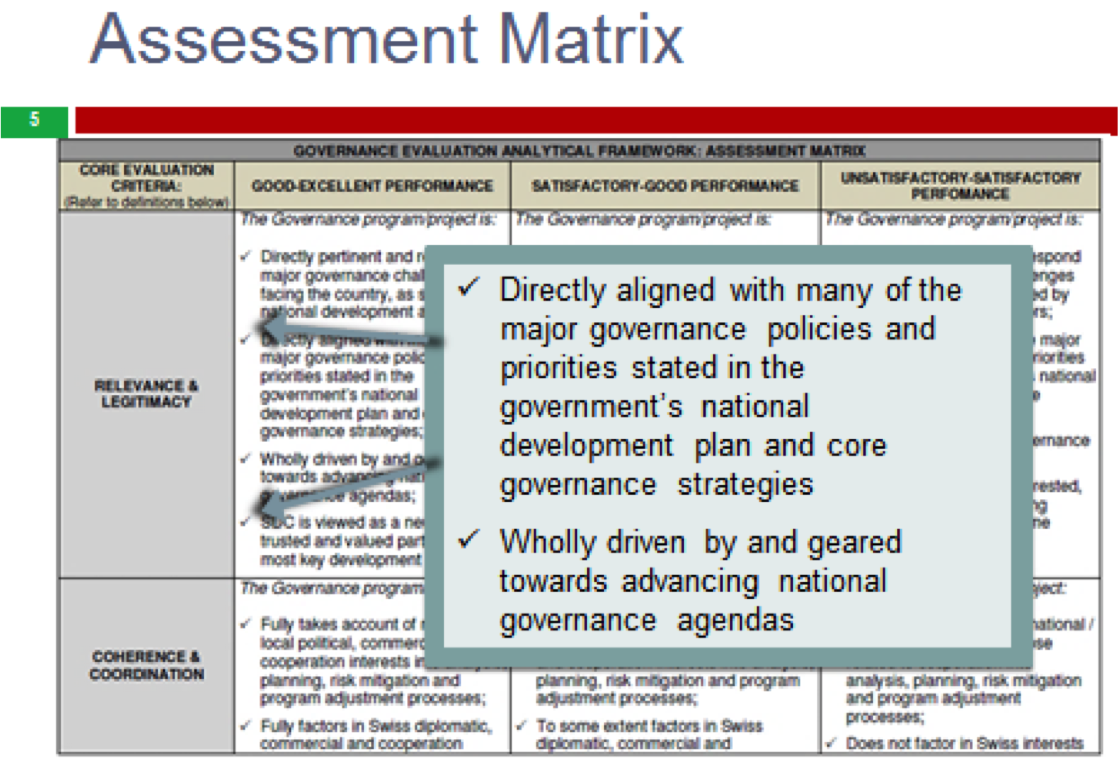

In our evaluation of the governance programming and mainstreaming of the Swiss Agency for Development and Cooperation (SDC), we constructed a matrix of evaluation criteria based on the Paris Declaration on Aid Effectiveness, SDC’s own guidelines, and recent research. International and local consultant teams had to agree on their rankings of individual interventions on this matrix. The new set of criteria enabled us to understand, for example, the importance of the roles of legitimacy and adaptive learning in effective governance programs.

2. Use mixed methods to examine both the “supply” and “demand” sides of governance.

Ideally, institutions supply policies and services, while citizens demand results and accountability. Building the capacities of both sides to work effectively, separately and with each other, is central to programming success. Evaluators must assess the performance of both supply and demand initiatives. In our study of the Dutch-supported Local Government Scorecard Initiative in Uganda, for example, we employed quantitative and qualitative methods to assess results in local councils (the supply side) and among citizens (the demand side). In Rwanda, we applied the techniques of fuzzy-set Qualitative Comparative Analysis (fsQCA) to examine Dutch-supported governance interventions.

3. Be alert to alternative pathways to good-governance outcomes and to unintended consequences.

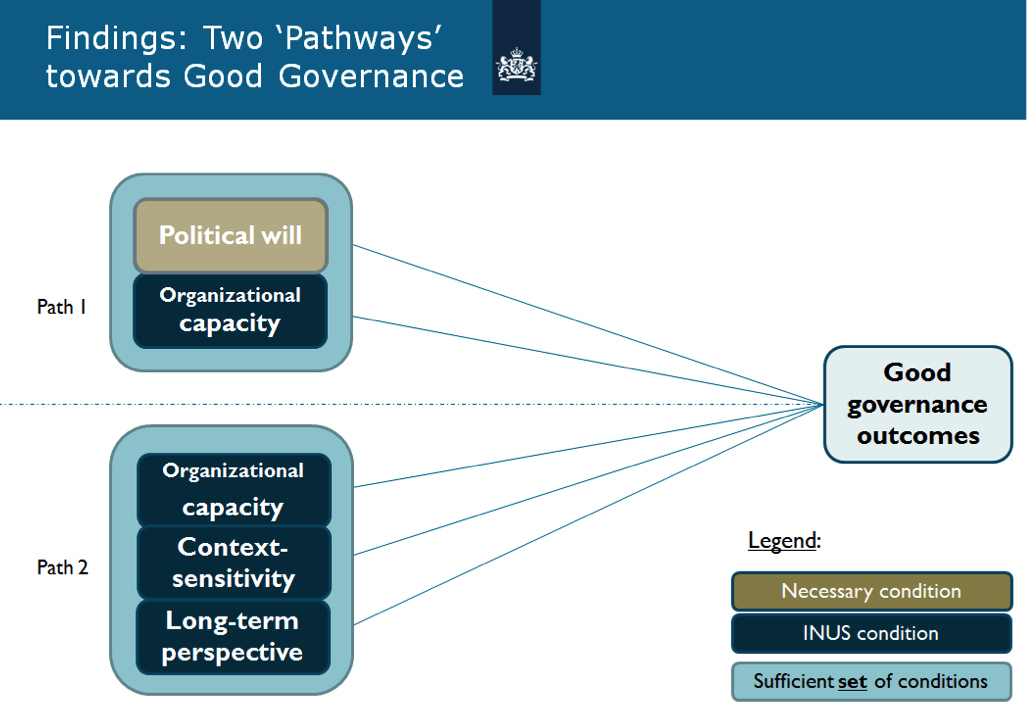

The Dutch Rwanda study revealed that there were two pathways to good governance in that experience: one driven by strong political will paired with sufficient organizational capacity of implementing partners, and a second path forged by interventions that were context-sensitive, took a long-term perspective, and were implemented by partners with sufficient organizational capacity. It also was clear that, regardless of the pathway, monitoring and evaluation must be well-integrated into the implementation process in order to optimize learning and results.

Source: Ter Horst, 2015
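For readers new to fsQCA, the two-pathway finding can be read as a fuzzy-set expression: conditions joined by "and" take the minimum membership score, and alternative pathways joined by "or" take the maximum. The following Python sketch illustrates that logic along with the standard sufficiency consistency and coverage measures. It is not the study's actual analysis: the condition names, the three cases, and all membership scores are hypothetical and serve only to show the arithmetic.

```python
from typing import Dict, List

Case = Dict[str, float]  # fuzzy membership scores between 0.0 and 1.0


def pathway_membership(case: Case) -> float:
    """Membership in 'pathway 1 OR pathway 2' (fuzzy AND = min, fuzzy OR = max)."""
    # Pathway 1: strong political will AND sufficient organizational capacity.
    path_1 = min(case["political_will"], case["org_capacity"])
    # Pathway 2: context-sensitive AND long-term AND sufficient capacity.
    path_2 = min(case["context_sensitive"], case["long_term"], case["org_capacity"])
    return max(path_1, path_2)


def consistency(cases: List[Case], outcome: str = "good_governance") -> float:
    """Sufficiency consistency: sum(min(x, y)) / sum(x)."""
    xs = [pathway_membership(c) for c in cases]
    ys = [c[outcome] for c in cases]
    total_x = sum(xs)
    if total_x == 0:
        return 0.0
    return sum(min(x, y) for x, y in zip(xs, ys)) / total_x


def coverage(cases: List[Case], outcome: str = "good_governance") -> float:
    """Coverage: sum(min(x, y)) / sum(y)."""
    xs = [pathway_membership(c) for c in cases]
    ys = [c[outcome] for c in cases]
    total_y = sum(ys)
    if total_y == 0:
        return 0.0
    return sum(min(x, y) for x, y in zip(xs, ys)) / total_y


# Hypothetical cases: invented scores, NOT data from the Rwanda study.
example_cases: List[Case] = [
    {"political_will": 0.9, "org_capacity": 0.8, "context_sensitive": 0.3,
     "long_term": 0.4, "good_governance": 0.9},
    {"political_will": 0.2, "org_capacity": 0.7, "context_sensitive": 0.8,
     "long_term": 0.9, "good_governance": 0.6},
    {"political_will": 0.3, "org_capacity": 0.2, "context_sensitive": 0.6,
     "long_term": 0.5, "good_governance": 0.1},
]

if __name__ == "__main__":
    for i, case in enumerate(example_cases, start=1):
        print(f"case {i}: pathway membership = {pathway_membership(case):.2f}")
    print(f"consistency = {consistency(example_cases):.3f}")
    print(f"coverage    = {coverage(example_cases):.3f}")
```

In this sketch, organizational capacity appears in both pathways, so a case with low capacity scores low on the combined solution regardless of its other conditions. In practice, dedicated QCA software would also handle calibration and truth-table minimization, which this example leaves out.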

And be alert to unintended consequences. Case studies in the Swiss evaluation illustrated how central governments can instrumentalize decentralization to recentralize their power and exert control over decisions at the local level, while ostensibly supporting decentralization. Donor agencies must be able to recognize such dynamics and adjust their programming accordingly.

Rad Resources: On our evaluations, see PPT, Report; On fsQCA, see QCA, PDF

Do you have questions, concerns, kudos, or content to extend this aea365 contribution? Please add them in the comments section for this post on the aea365 webpage so that we may enrich our community of practice. Would you like to submit an aea365 Tip? Please send a note of interest to aea365@eval.org. aea365 is sponsored by the American Evaluation Association and provides a Tip-a-Day by and for evaluators.

Their website has this PDF with annexes. Is this it? See the Assessment Matrix starting on p. 295 of the PDF. https://ext.d-nsbp-p.admin.ch/NSBExterneStudien/560/attachment/en/2271.pdf

Yes, Joy. You have correctly found the annex with the matrix.

Yes, thanks, Joy. The full matrix of evaluation criteria is indeed found in that link. It is Table 1 of Annex B. Best regards, Ted Jackson

Is the Assessment Matrix available somewhere publicly? I like to collect examples of evaluation rubrics, as they’re also known, since they’re still relatively new.

Kylie, Thank you for your interest. See Joy’s comment above. That is the link to the annexes.