Hello to fellow evaluators around the world! We are Chhork Boeurng and Ihor Matviichuk, monitoring and evaluation managers for Pact. We work on two different projects located in Cambodia and Ukraine, both of which work to strengthen civic engagement.

As citizens of our respective project countries, we are acutely aware of how limited the space for civil society remains, despite longstanding efforts to expand it. It has likewise been very difficult for us, as evaluators, to measure the outcomes of our work. We have relied mostly on qualitative evidence, which offers much of the flexibility needed to evaluate in highly complex environments. While qualitative measures can add wonderful nuance to concepts such as ‘civic engagement,’ we wanted to compare changes over time and across populations for a larger number of people, while still accounting for the complex and politically sensitive dynamics that define our operating environments. For this reason, we each worked to incorporate more quantitative methods into our civic engagement evaluations: in Cambodia we developed an index, and in Ukraine we used propensity score matching (PSM).

Hot tip: Indexes are a great way to measure complex concepts over time. Try adapting an existing tool to your context, and consider structuring the index around your theory of change (TOC). Our index has three ontological levels linked to our TOC. Measuring all three simultaneously lets us track change even when it does not progress neatly from one level to the next. We use survey research to produce index data that are comparable over time.
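To make the idea concrete, here is a minimal Python sketch of how survey answers could be rolled up into a three-level composite index. It is a hypothetical illustration, not the Cambodia index itself: the level names ('aware', 'engaged', 'active', borrowed from the wording of the PSM tip below), the survey item names, the 1-5 Likert scale, and the equal weighting are all assumptions.

```python
from statistics import mean

# Hypothetical index structure: three levels, each measured by survey items
# answered on a 1-5 Likert scale. Real levels and items would follow your TOC.
LEVELS = {
    "aware": ["knows_rights", "follows_local_news"],
    "engaged": ["attended_meeting", "discussed_local_issue"],
    "active": ["contacted_official", "joined_initiative"],
}
SCALE_MIN, SCALE_MAX = 1, 5


def rescale(value):
    """Map a Likert answer onto 0-1 so every item contributes on the same scale."""
    return (value - SCALE_MIN) / (SCALE_MAX - SCALE_MIN)


def score_respondent(answers):
    """Return each level's sub-score plus an equally weighted overall index (0-1)."""
    scores = {
        level: mean(rescale(answers[item]) for item in items)
        for level, items in LEVELS.items()
    }
    scores["index"] = mean(scores.values())
    return scores


def round_summary(respondents):
    """Average the sub-scores and the overall index across one survey round."""
    scored = [score_respondent(r) for r in respondents]
    return {key: mean(s[key] for s in scored) for key in [*LEVELS, "index"]}


if __name__ == "__main__":
    all_items = [item for items in LEVELS.values() for item in items]
    # Made-up answers for two respondents per round, purely to show the mechanics.
    baseline = [dict.fromkeys(all_items, 1), dict.fromkeys(all_items, 3)]
    endline = [dict.fromkeys(all_items, 3), dict.fromkeys(all_items, 5)]
    before, after = round_summary(baseline), round_summary(endline)
    for key in before:
        print(f"{key}: {before[key]:.2f} -> {after[key]:.2f}")
```

Keeping the per-level sub-scores alongside the overall score is what allows change to be read level by level (for example, a rise in 'active' without a matching rise in 'aware') rather than only as one aggregate number.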

Hot tip: Consider developing ‘archetypes’ that differentiate beneficiaries by characteristics such as skills, education, and family context. Doing so can help projects tailor and evaluate interventions. For example, in one of our projects we aim to support unique young women from a population of 350,000: the archetypes help us evaluate interventions by beneficiary characteristics, and our quantitative, survey-based approach keeps the analysis manageable.
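A hypothetical Python sketch of the archetype idea follows: each beneficiary is assigned to exactly one archetype by simple rules, and an outcome score is then summarized per archetype. The attributes, archetype names, thresholds, and the 0-1 outcome score are illustrative assumptions, not the project's actual archetypes, which would be defined from project data and staff knowledge.

```python
from collections import defaultdict
from dataclasses import dataclass
from statistics import mean


@dataclass
class Beneficiary:
    # Hypothetical attributes standing in for skills, education, and family context.
    years_of_schooling: int
    has_vocational_skill: bool
    has_dependents: bool
    outcome_score: float  # e.g. a 0-1 index score from the survey


def assign_archetype(b: Beneficiary) -> str:
    """Rule-based assignment; rules are checked in order, so each person gets one archetype."""
    if b.years_of_schooling >= 12 and b.has_vocational_skill:
        return "skilled_graduate"
    if b.has_dependents:
        return "young_caregiver"
    return "general"


def outcomes_by_archetype(people):
    """Return {archetype: (number of people, mean outcome score)}."""
    groups = defaultdict(list)
    for person in people:
        groups[assign_archetype(person)].append(person.outcome_score)
    return {name: (len(scores), mean(scores)) for name, scores in groups.items()}


if __name__ == "__main__":
    # Made-up records, only to show the mechanics.
    people = [
        Beneficiary(12, True, False, 0.8),
        Beneficiary(9, False, True, 0.4),
        Beneficiary(10, False, True, 0.6),
        Beneficiary(8, False, False, 0.3),
    ]
    for name, (count, avg) in outcomes_by_archetype(people).items():
        print(f"{name}: n={count}, mean outcome={avg:.2f}")
```

With real data, per-archetype results would more usefully be compared between survey rounds, or between people who did and did not receive a given intervention, than read on their own.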

Lesson learned: Asking about civic engagement where there is little space for civil society and people fear repercussions can raise ethical issues and lead to untruthful answers. It took us multiple rounds of testing to develop an index sensitive enough to elicit accurate responses while keeping respondents safe. We also involved participants from the beginning.

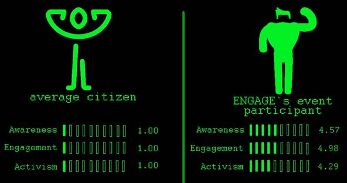

Cool trick: Governance projects often work closely with a small number of individuals selected for particular traits, which makes it difficult to draw broader conclusions. We wanted to know whether our results were really due to our project and whether they would hold for other populations, so we used PSM. Despite the intimidating name, PSM is a relatively easy approach! We matched project participants with a comparison group on demographics and levels of civic engagement. This showed that project participants were four times more likely than the average citizen to be civically aware, engaged, and active, which gave us the evidence we needed to replicate and scale.
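For readers new to PSM, here is a minimal, dependency-free Python sketch of the usual steps: estimate each person's probability of being a participant (the propensity score) from their covariates with logistic regression, pair each participant with the non-participant whose score is closest, and compare outcome rates across the matched pairs. It runs on simulated data, not our Ukraine data, and the covariates, the caliper of 0.05, and the choice of 1:1 nearest-neighbor matching with replacement are assumptions made for illustration; in practice a statistical package and balance checks on the matched sample would normally be used.

```python
import math
import random


def sigmoid(z):
    """Numerically stable logistic function."""
    if z >= 0:
        return 1 / (1 + math.exp(-z))
    e = math.exp(z)
    return e / (1 + e)


def propensity(w, xi):
    """Estimated probability of participation for one person's covariates."""
    return sigmoid(w[0] + sum(wj * xj for wj, xj in zip(w[1:], xi)))


def fit_logistic(X, y, lr=0.5, epochs=500):
    """Batch gradient-descent logistic regression; returns [intercept, *weights]."""
    w = [0.0] * (len(X[0]) + 1)
    for _ in range(epochs):
        grad = [0.0] * len(w)
        for xi, yi in zip(X, y):
            err = propensity(w, xi) - yi
            grad[0] += err
            for j, xj in enumerate(xi):
                grad[j + 1] += err * xj
        w = [wj - lr * g / len(X) for wj, g in zip(w, grad)]
    return w


def match_nearest(scores, treated, caliper=0.05):
    """1:1 nearest-neighbor matching on the score, with replacement, within a caliper."""
    controls = [i for i, t in enumerate(treated) if not t]
    pairs = []
    for i, t in enumerate(treated):
        if not t:
            continue
        j = min(controls, key=lambda c: abs(scores[c] - scores[i]))
        if abs(scores[j] - scores[i]) <= caliper:  # drop participants with no close match
            pairs.append((i, j))
    return pairs


def compare_outcomes(pairs, outcome):
    """Outcome rate among matched participants and among their matched comparisons."""
    if not pairs:
        raise ValueError("no participants could be matched")
    treated_rate = sum(outcome[i] for i, _ in pairs) / len(pairs)
    control_rate = sum(outcome[j] for _, j in pairs) / len(pairs)
    return treated_rate, control_rate


if __name__ == "__main__":
    rng = random.Random(0)
    X, treated, outcome = [], [], []
    for _ in range(300):  # simulated people, not real survey data
        age = rng.gauss(0, 1)  # standardized covariates (hypothetical)
        baseline_engagement = rng.gauss(0, 1)
        joined = rng.random() < sigmoid(0.8 * baseline_engagement - 0.5)
        p_engaged = sigmoid(-1.0 + 0.7 * baseline_engagement + (1.0 if joined else 0.0))
        X.append([age, baseline_engagement])
        treated.append(joined)
        outcome.append(int(rng.random() < p_engaged))

    w = fit_logistic(X, [int(t) for t in treated])
    scores = [propensity(w, xi) for xi in X]
    pairs = match_nearest(scores, treated)
    t_rate, c_rate = compare_outcomes(pairs, outcome)
    ratio = t_rate / c_rate if c_rate else float("inf")
    print(f"matched participants: {len(pairs)}")
    print(f"engaged: participants {t_rate:.2f}, comparison {c_rate:.2f}, ratio {ratio:.2f}")
```

The ratio of the two matched rates is the kind of figure behind a statement such as "four times more likely"; the simulated numbers this prints say nothing about the project's actual results.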

Lesson learned: You may already have the necessary data for advanced analysis, but you may need to identify the method that meets your needs. Give it a try!

The American Evaluation Association is celebrating DemTIG Week with our colleagues in the Democracy and Governance TIG. All of the blog contributions this week come from our DemTIG members. Do you have questions, concerns, kudos, or content to extend this aea365 contribution? Please add them in the comments section for this post on the aea365 webpage so that we may enrich our community of practice. Would you like to submit an aea365 Tip? Please send a note of interest to aea365@eval.org. aea365 is sponsored by the American Evaluation Association and provides a Tip-a-Day by and for evaluators.