Hi! I’m Tara Gregory, Director of the Center for Applied Research and Evaluation (CARE) at Wichita State University. Like any evaluator, the staff of CARE are frequently tasked with figuring out what difference programs are making for those they serve. So, we tend to be really focused on outcomes and see outputs as the relatively easy part of evaluating programs. However, a recent experience reminded me not to overlook the importance of outputs when designing and, especially, communicating about evaluations.

In this instance, my team and I had designed what we thought was a really great evaluation that covered all the bases in a particularly artful manner – and I’m only being partially facetious. We thought we’d done a great job. But the response from program staff was “I just don’t think you’re measuring anything.” It finally occurred to us that our focus on outcomes in describing the evaluation had left out a piece of the picture that was particularly relevant for this client – the outputs or accountability measures that indicated programs were actually doing something. It wasn’t that we didn’t identify or plan to collect outputs. We just didn’t highlight how they fit in the overall evaluation.

Lesson Learned: While the toughest part of an evaluation is often figuring out how to measure outcomes, clients still need to know that their efforts are worth something in terms of the stuff that’s easy to count (e.g., number of people served, number of referrals, number of resources distributed). Although just delivering a service doesn’t necessarily mean it was effective, it’s still important to document and communicate the products of their efforts. Funders typically require outputs for accountability, and programs value the tangible evidence of their work.

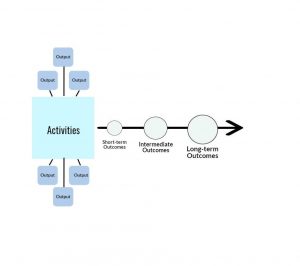

Cool Trick: In returning to the drawing board for a better way to communicate our evaluation plan, we created a graphic that focuses on the path to achieving outcomes with the outputs offset to show that they’re important, but not the end result of the program. In an actual logic model or evaluation plan, we’d name the activities, outputs and outcomes more specifically based on the program. But this graphic helps keep the elements in perspective.

The American Evaluation Association is celebrating Community Psychology TIG Week with our colleagues in the CP AEA Topical Interest Group. The contributions all this week to aea365 come from our CP TIG members. Do you have questions, concerns, kudos, or content to extend this aea365 contribution? Please add them in the comments section for this post on the aea365 webpage so that we may enrich our community of practice. Would you like to submit an aea365 Tip? Please send a note of interest to aea365@eval.org. aea365 is sponsored by the American Evaluation Association and provides a Tip-a-Day by and for evaluators.

Hi Tara,

Thanks so much for sharing your experience. I am in the middle of creating a program evaluation and I may be making the same error. I believe I am overlooking some outputs of the program. I now realize that without this data, the evaluation does not give a full picture. Also, the outcome data for this particular program is difficult to gather. If the program doesn’t meet our predicted results, output data will give us something to fall back on. It shows our accountability and provides other tangible evidence.

I also really liked the flow chart graphic you provided. It demonstrates a clear action plan for program evaluators to follow. I may incorporate this concept in the design of my own program evaluation.

I look forward to reading more of your work.

Kind regards,

Jessica

I love that graphic, Tara. I’m thinking of many times it would have been useful in conversations with program staff (including yesterday!) and I’m looking forward to using it in the future. Thank you so much for sharing!

Laurie