I’m Clara Hagens. I work for Catholic Relief Services (CRS) as the Regional Technical Advisor for Monitoring, Evaluation, Accountability and Learning (MEAL) in Asia. I’d like to share a resource pack we have developed to help teams develop and implement monitoring and evaluation (M&E) systems throughout the different phases of an emergency response.

Rad Resource: CRS’ Monitoring, Evaluation, Accountability and Learning in Emergencies: A Resource Pack for Simple and Strong MEAL provides guidance on the key principles of MEAL in emergencies and helps staff design use-oriented M&E systems that collect just enough information to inform high-quality, highly responsive emergency programming. The Resource Pack clarifies what is different about M&E in an emergency versus a non-emergency setting: the M&E system must remain dynamic; gathering the perspectives of the most vulnerable groups is valued over rigorous or heavy data collection methods; teams are responsible for collecting information on changes in context; and results must be used immediately, often daily, so that the response continues to meet the community’s needs.

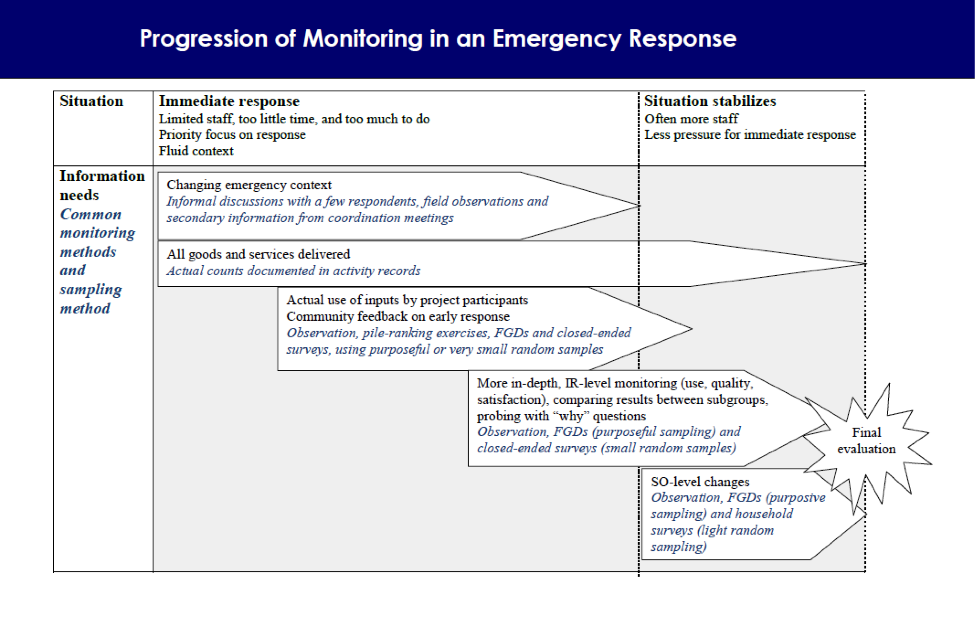

The Resource Pack demonstrates how both information needs and the appropriate mix of methods and respondents to meet those needs evolve during an emergency response. Please see the figure below for a graphic depicting this evolution.

(Reprinted by permission from Dominique Morel and Clara Hagens, Monitoring, Evaluation, Accountability and Learning in Emergencies: A Resource Pack for Simple and Strong MEAL (Baltimore, MD: Catholic Relief Services, 2012), [page 14].)

The Resource Pack also emphasizes the importance of monitoring community satisfaction with the response and developing feedback mechanisms to increase accountability. Additional topics in the Resource Pack include sampling during data collection (both when and how to sample), conducting a daily debrief session, and selecting an appropriate mix of learning events at different points in the response.

Do you have questions, concerns, kudos, or content to extend this aea365 contribution? Please add them in the comments section for this post on the aea365 webpage so that we may enrich our community of practice. Would you like to submit an aea365 Tip? Please send a note of interest to aea365@eval.org. aea365 is sponsored by the American Evaluation Association and provides a Tip-a-Day by and for evaluators.

Clara, thanks for this post, especially this week when everyone is so focused on the Philippines Typhoon response. I think it’s critical that people stop and take time to think about MEAL, even in the midst of the chaos, to ensure our work is most effective. I hope you guys are re-posting, tweeting, etc. these tools as widely as possible! Thanks to CRS for their posts all week, great to see such robust participation!